Philosophy Job Placement Data Update

Carolyn Dicey Jennings (UC Merced) posts that the final report for the Academic Placement Data and Analysis project is complete. She’ll eventually be posting more about it, but I repost some of the information from the report below. One thing worth noting is that though 169 programs were contacted, only 87 added or updated information to the project database. If you are at a PhD-granting philosophy program, consider checking whether your department took part, or discussing it at your next meeting.

Clearly, a lot of work went into this project, and I encourage readers to look over the full report. Here are some findings (with images reposted from the report):

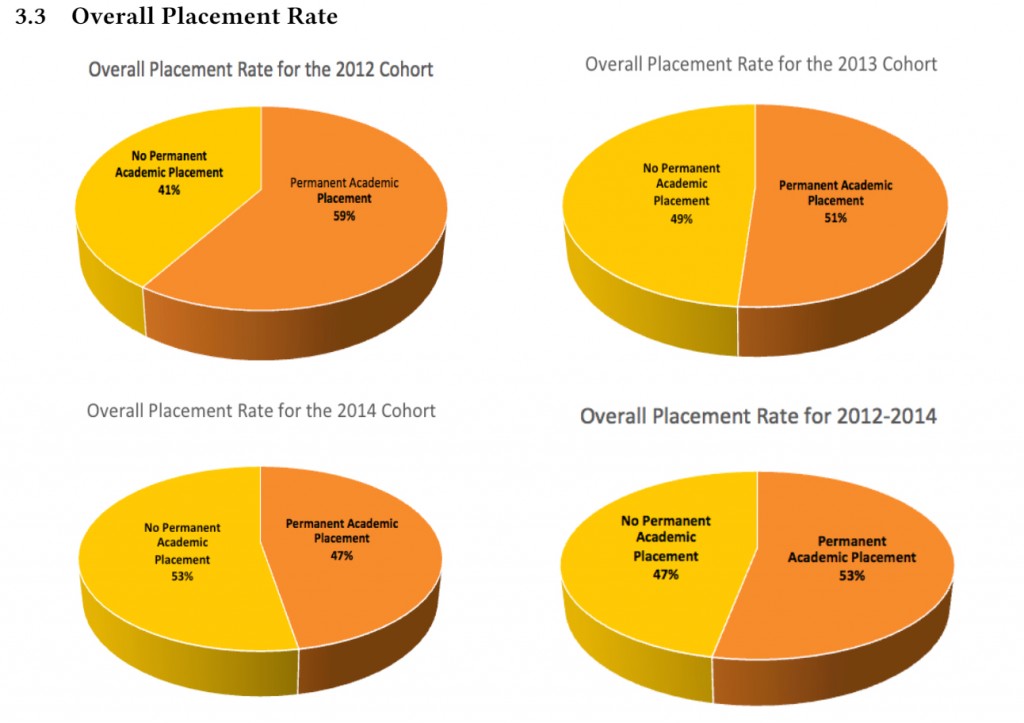

First, over the 2012-2014 period, on average, 53% of job seekers found permanent (tenure-track or similar) academic employment, with the trend worsening over the years:

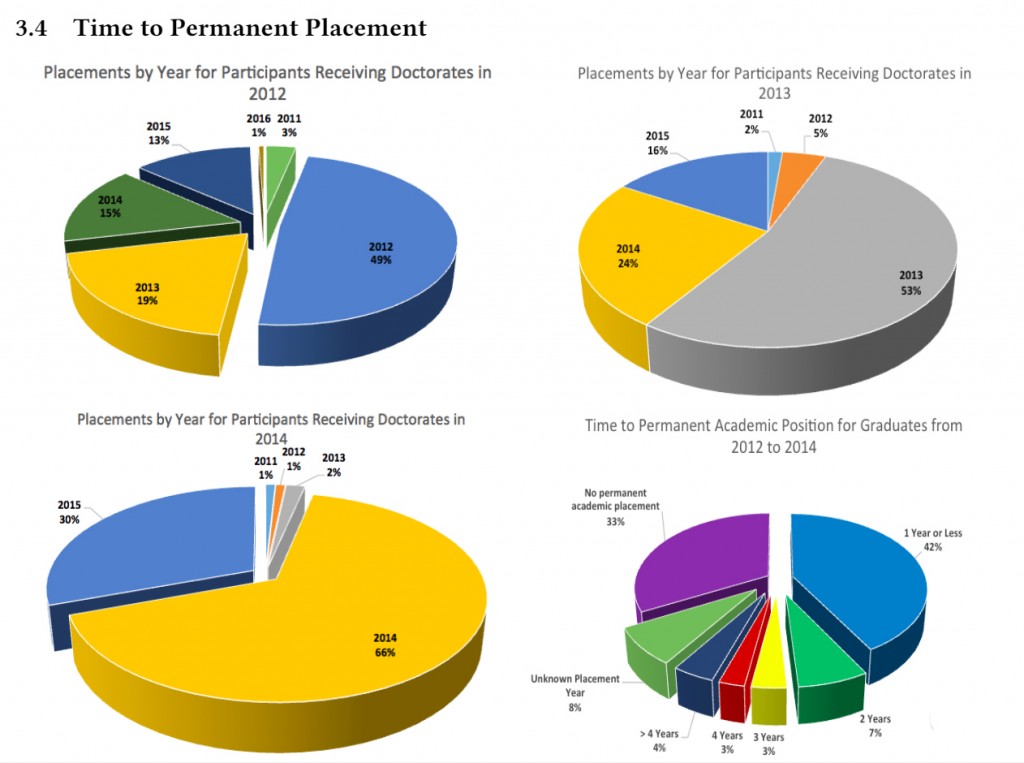

Over the same time period, 42% of job candidates are placed in a permanent position the year they earn their PhD:

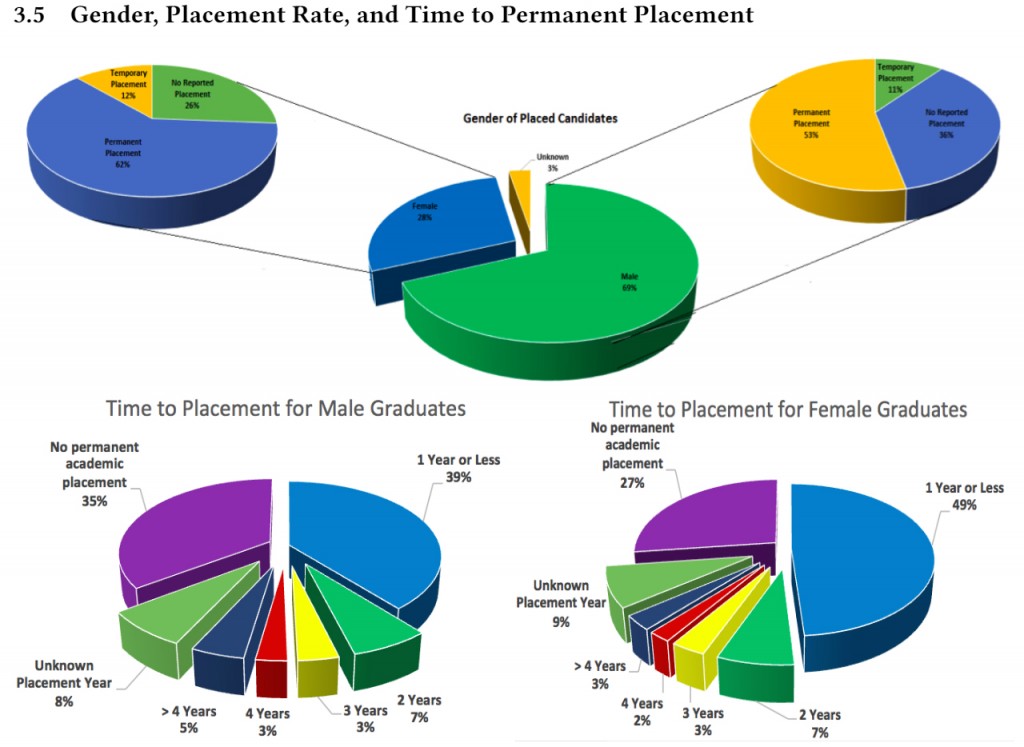

As for gender, 62% of female candidates and 53% of male candidates eventually find permanent positions, with 49% of female candidates getting placed in those positions in their first year on the market, compared to 39% for male candidates. There are roughly two-and-a-half times* as many placed male job candidates as female ones.

Feel free to discuss these findings, or share others from the report you think are worth drawing attention to.

*I had initially written “roughly three times” here. Thanks to David Wallace for the correction.

“There are roughly three times more placed male job candidates than female ones.”

It’s 2.46:1, if I’m reading the data right – so “roughly twice” if you want to round to the nearest integer, though “roughly 2.5 times as many” is probably clearer.

Thanks. I edited the post to make that change.

It seems to me that the interesting news here is not the total numbers (which just reflects genders in the initial pool), but the gendered difference in placement percentages: “62% of female candidates and 53% of male candidates eventually find permanent positions, with 49% of female candidates getting placed in those positions in their first year on the market, compared to 39% for male candidates.” 10% pro female is quite a large effect.

Some of us aren’t quite used to the general gender disparity yet.

First, we should thank Carolyn and everyone who contributed; it’s a really nice project that gives a lot of useful information. I have only skimmed through the report so far, but I have a few remarks/questions. I apologize in advance if the answer to one of my questions is already in the report.

First, in addition to the overall placement rate, it would be useful to have the placement rate for each individual department, as well as the standard deviation. It would help undergrads to choose where to apply and might create more incentive for departments to assist their graduate students in getting a job.

One thing which surprised me is that, according to the result of the regression analysis, having metaphysics and epistemology as your AOS significantly lowers your chance of getting a permanent position. According to this analysis, someone who works in value theory is 28% more likely to get a permanent position, someone who works in history of Western philosophy 30% more likely and, perhaps the most surprising for me (though I would be glad if it were true for purely selfish reasons), someone who works in science, logic and math is 95% more likely to get a permanent position.

There are some people whose AOS was unknown, but I couldn’t find how many in the report, so it would be great if Carolyn could tell us.

According to the results of the regression analysis, being a woman also increases your chances of getting a permanent position by 85%. Based on previous data about publications that we discussed here (http://dailynous.com/2014/12/23/this-year-in-philosophical-intellectual-history/), this is most likely an underestimation, since the analysis doesn’t control for the number of publications. These data suggest that, if there is a leak in the pipeline that explains the underrepresentation of women in the profession, it doesn’t occur in the transition from graduate school to a first academic position. I wonder if we have reliable data about what’s going on at other stages of the pipeline.

In the future, it would be really interesting to include the number of publications in the analysis, although I understand that it would be a lot of work. First of all, the regression analysis could estimate the independent effect of the number of publications on your chances of getting a job, which would be really useful since there is even a debate as to whether having publications helps at all. At least, in my department, not everybody agrees that having publications helps and students get conflicting advice about whether they should try to publish while in graduate school. Moreover, as I noted above in connection to gender, including the number of publications in the analysis would make the estimation of the independent effects of not only gender but also AOS more accurate.

This, of course, assumes that number of publications is a reliable measure of the quality of an applicant. Why not choose some other factor (e.g. average teaching evals, or perhaps general pleasantness/collegiality, on a scale of 1-10? Or departmental service and involvement? etc.). The idea that the only, or even primary, measure of the qualifications of an applicant is his or her number of publications is bizarre.

Presumably we actually have data about publications, and we don’t have the data about teaching evals. And pleasantness/collegiality don’t seem to be part of the requirements to get tenure, and so probably, though desirable, not a part of the job descriptions.

It would be nice for those trying to justify the obvious benefits women have on the job market if it were true that the women hired have some heretofore unknown qualities that justify their being preferred in hiring. But until such qualities are actually offered, and the data on them studied, we have to go on the information we have. And that is that women are massively disproportionately hired based on the number of them that get PhDs, and one potential explanation (they have more publications) is definitely not true. Until there’s some other explanation, the best explanation seems to be the only difference between them and the male candidates–they’re women.

What about my post makes you think that I “am trying to justify the obvious benefits women have on the job market”? “Number of publications” just seems like an extremely crude measure, such that it would be meaningless to “control” for it in the data. In fact, one might think that a high number of publications might be the mark of a particularly bad, but tenacious candidate, who despite his or her failure on the market sticks around year after year, publishing where he or she can.

It appears, on the face of it, that women have a leg up on the market, I’m certainly happy to admit that. I (just) think that PL’s statement that 85% is an “underestimation” because it doesn’t take publication data into account is absurd.

I agree that just counting the number of publications is a very crude measure. For instance, it doesn’t take into account the quality of the publications in question, although this could probably be done relatively easily by taking into account the prestige of the journals in which they are published. But I don’t see how that’s relevant for my argument that, on the assumption that, other things being equal, having more publications increases the likelihood that you will get a job, the analysis in the report most likely underestimates the effect that being a woman has on your chances of getting a job.

Of course, if we just count the number of publications, any independent effect of the number of publications that we find after including that variable in the regression analysis might hide the fact that, for instance, bad publications lower your chances of getting a job even though good publications increase them. But, as long as publications by women aren’t on average better than publications by men, the argument still goes through provided that when the number of publications is included in the analysis, we find that, other things being equal, having more publications makes it more likely that you will get a job. In other words, how crude that measure is really doesn’t matter, as long as it’s not crude in a way that obfuscates systematic differences between publications by men and publications by women that affect their chances of getting a job.

Of course, my argument rests on another assumption, namely that men and women do not also differ systematically with respect to some other variable, one that has nothing to do with publications, in a way that might partly explain why the analysis published in the report found that, other things being equal, women are more likely to get a job than men. But I already noted that and, if you read another comment I posted below (http://dailynous.com/2015/09/01/philosophy-job-placement-data-update/#comment-70002), you will see that I even suggested that the prestige of PhD-granting institution might conceivably be such a variable.

Thanks, I agree with everything you’ve said. I suppose I think the presumption should go in favor of thinking that there is some factor at play here (time on the market would be another obvious one) that explains the discrepancy in # of publications at time of hiring — I mention this in my post directly below. I would think that one would *only* assume that a strong preference for women were the cause here *of the publication difference* if one thought that number of publications were the only other main factor in hiring decisions (I think it clearly is not).

Sorry for the double post: It may also be worth mentioning that number of publications might be correlated strongly with other factors that are tied to gender (AOS, for instance, since early publication may be encouraged or required more in some subfields than others; years on the market might be another, since men tend to spend longer on the market). I don’t know that this is the case; generally, I think that figuring out causes and effects here would certainly go beyond the resources of this data, and would perhaps be something that’s impossible to figure out on the basis of (even very detailed) placement data.

In response to your first point, I didn’t say anything about whether the number of publications was a reliable measure of the quality of an applicant (I guess people can reasonably disagree about that), only that it may well have an independent effect on the chances of an applicant to get a job. Therefore, if you want a more accurate picture of what’s going on, it would be a good thing to include it. Moreover, although it would take a lot of work to include the number of publications in the analysis, it would certainly be a lot easier than with the other factors you mentioned.

In response to your second point, you’re certainly right that the number of publications might be correlated to other factors which are themselves related to gender, such as AOS. But, insofar as those other factors are also included (which, for instance, is already the case for AOS), the regression analysis could help us to get a sense of the *independent* contribution the number of publications makes, i. e. how much having publications increases/decreases your chances of getting a job *all other things being equal*.

I don’t know how else to read this statement, made by you above: “According to the results of the regression analysis, being a woman also increases your chances of getting a permanent position by 85%. Based on previous data about publications that we discussed here (http://dailynous.com/2014/12/23/this-year-in-philosophical-intellectual-history/), this is most likely an underestimation, since the analysis doesn’t control for the number of publications.” I.e., it would only be an “underestimation” if we expected the number of publications to generally correlate with higher odds of getting a job. I see no reason to have this expectation, unless we think that the number of publications is the measure of a quality of a candidate.

“I see no reason to have this expectation, unless we think that the number of publications is the measure of a quality of a candidate.”

Here’s one possible explanation that is neutral on the quality of a candidate: tenure almost always requires publications. So even if two candidates are equal in quality–whatever that might mean–there could be reason to prefer hiring the one with publications. After all, extant publications are better proof that one can do the publishing required for tenure than no publications. One might even think that candidate A is better than candidate B, but that A’s projects are not easily publishable and so s/he is a risk for tenure.

You’re right that, in the argument you quoted, I’m assuming that, all other things being equal, having more publications increases your chances of getting a job. But this could be true even if the number of publications doesn’t reliably measure the quality of an applicant. All it takes for it to be true is that, for whatever reason, having more publications makes it more likely that you will get a job, all other things being equal. Unless by “quality of an applicant” you mean something like “how likely the applicant is to find a job”, in which case we were just talking past each other.

So, if what you were saying is that, in the argument you quoted, I was assuming that, all other things being equal, having more publications makes it more likely that you will get a job, then you were right and I just misunderstood you. But, even if that’s what you meant, you are certainly wrong to think that, in order for my argument to work, the number of publications has to be “the only, or even primary, measure of the qualifications of an applicant”.

The argument in question does not rest on this assumption, but only on the assumption that, all other things being equal, having more publications increases your chances to get a job. This strikes me as a very reasonable assumption, though we should certainly test it by including the number of publications in the analysis, especially since this would not only tell us whether that assumption is true but also give us a sense of the size of the effect.

Rather than include number of publications in the analysis, it might make more sense to include the number of publications *per year on the market* in the analysis.

This is also a reply to babygirl’s last comment above (http://dailynous.com/2015/09/01/philosophy-job-placement-data-update/#comment-70046); it seems that we have exceeded the number of nested comments allowed.

Both of you suggest that, if we take into account the number of years on the market, the difference between men and women in the number of publications will disappear. I doubt that, but in any case, just adding a variable for the number of publications in the analysis will automatically take care of that, since it already controls for the year of graduation.

As for the more general point that babygirl also made, I agree that men and women could systematically differ in ways that explain, at least in part, why men have on average more publications than women. For instance, it could be that women are more likely than men to specialize in areas which — other things being equal — not only make it more likely that they will get a job, but also less likely that — other things being equal — they will have many publications. It could also be that women are more likely to get their PhD from a prestigious institution, and that people who got their PhD from a prestigious institution are not only more likely — other things being equal — to get a job, but also less likely — other things being equal — to have publications.

In fact, I have no doubt that *part* of the difference between men and women in the number of publications can be explained in that way, but according to the data previously collected by Carolyn on the number of publications, the difference is large enough that I would be very surprised if *all* of it could be explained in that way. (One thing to keep in mind, however, is that unlike the data she just published, the data previously collected by Carolyn about the number of publications don’t tell us anything about unsuccessful applicants.)

Now, if I’m right about that, then provided that, other things being equal, having more publications makes it more likely that you will get a job, the analysis in the report, which doesn’t control for the number of publications, likely underestimates the positive effect of being a woman on the odds that you will get a job. This seems like a perfectly good argument to me. To be sure, it rests on a lot of assumptions that haven’t been tested yet, but that’s why I think we should include more variables, such as the number of publications and the prestige of PhD-granting institution, in the regression analysis. This would also help to address questions that have nothing to do with gender, but that graduate students (both men and women) would find really interesting, such as how much, if at all, publications increase your odds of getting a job.

I suspect that, if you are so intent on rejecting my argument, it’s because you are assuming that, if at least part of the difference in the number of publications between men and women can’t be explained away, then it means that men are on average more qualified applicants than women. Now, I agree that it’s prima facie implausible, and that we should try to explain as much of the difference as we can by controlling for other variables. But, even if, as I think, part of the difference cannot be explained away, it needn’t be the case that men are on average more qualified than women. It could simply be, for instance, that women, or their advisors, know that — other things being equal — being a woman increases their chances of getting a job, so they more often than men choose not to publish while in graduate school and focus on writing a better dissertation.

In any case, I think there is no question that we should refine the analysis by adding more variables, not only because it would help getting a better idea of the role played by gender in hiring, but also because it would give all sorts of useful information to graduate students and departments.

Just one minor comment:

“According to the results of the regression analysis, being a woman also increases your chances of getting a permanent position by 85%”

It’s the odds that are increased by 85%, not the chances. An 85% increase in the odds is much less substantial than an 85% increase in the chances.

That’s absolutely right, thanks for correcting me. The regression analysis in the report estimates ratios of odds, not ratios of probabilities, so the way I expressed myself above is misleading.
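To make the odds-versus-chances distinction concrete, here is a minimal sketch of what an odds ratio of 1.85 does to a probability. The 53% baseline is only an illustrative assumption (borrowed from the overall placement figure in the post); the report's regression baseline is not stated in this thread, and the function name is hypothetical.

```python
def apply_odds_ratio(baseline_prob: float, odds_ratio: float) -> float:
    """Multiply the baseline odds by odds_ratio and convert back to a probability."""
    baseline_odds = baseline_prob / (1.0 - baseline_prob)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1.0 + new_odds)


# Assumption: 0.53 is an illustrative baseline, not a value taken from the regression.
baseline = 0.53
new_prob = apply_odds_ratio(baseline, 1.85)

# Relative change in the probability itself, for comparison with the 85% change in odds.
relative_increase = new_prob / baseline - 1.0

print(f"baseline probability: {baseline:.3f}")
print(f"probability after an 85% increase in odds: {new_prob:.3f}")
print(f"relative increase in probability: {relative_increase:.1%}")
```

Under that assumed baseline, an 85% increase in the odds moves the probability from 53% to roughly 68%, which is a relative increase of about 27%, not 85%. The gap between the two readings depends on the baseline: the lower the baseline probability, the closer the two get.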

It would also be great to add a variable in the regression analysis for the prestige of the PhD-granting institution. I’m guessing that it could be done relatively easily, since presumably you already have all the data and you could use Leiter-rank as a proxy for prestige, while on the other hand it would take a lot of work if you wanted to add the number of publications in the analysis. And, crucially, it would make the estimation of the independent effects of gender and AOS more accurate.

For instance, it could be that part of the benefit that being a woman gives you according to the current analysis comes from the fact that women are overrepresented in prestigious departments, since getting your PhD from such a department probably has an independent, positive effect on your chances. (Not even necessarily because there is a prestige-effect, at least not only, but because people from prestigious departments tend to be better.) It would be a bit surprising to me, but it’s certainly possible.

I also wouldn’t be surprised if, when controlling for the prestige of the PhD-granting institution, the penalty for specializing in metaphysics and epistemology was even greater, since my sense is that metaphysics and epistemology is overrepresented among graduate students in the best philosophy departments. But it’s just a vague impression and I don’t actually know that it’s the case.

What would it mean, do you think, if an institution didn’t participate? For instance, I don’t see UCSD as a participating institution. But I don’t take that to mean that UCSD has anything to hide….right?

No, in fact some institutions did participate but were not included because we couldn’t separately identify graduation data. We had two separate pieces of information–placement and graduation. An institution had to have both to be included.

Two quick points–1) It is worthwhile checking out the Evaluation and Outlook section if you, like Philippe, are interested in why we didn’t do program specific analyses. We hope to do these soon. 2) If you read through that section you will see why we probably cannot add publications for some time, due to the scope of that project and what we already have on the table. (But we will be looking for more sources of financial support that might enable this.) You mention some of the problems with just listing the number of publications–we all define what counts as a publication differently, and this is no small matter. One possibility would be to have philosophers rate the desirability of lists of publication venues and years that match actual candidates.

And a note of caution: the gender question is tricky. We currently have no idea what the profile of female and male candidates is like on the market. Noting the 1:2.5 split is key to remember that many women have dropped out of philosophy, and we still don’t know why. In fact, I noticed that the proportion of women in the placement data (28% in this subset) is smaller than I would have expected, given other data on women graduates (31% in 2011 according to one source). The self-selection effect for women has been noted in the sciences (http://www.statcan.gc.ca/pub/75-006-x/2013001/article/11874-eng.htm): “Although mathematical ability plays a role, it does not explain gender differences in STEM choices. Young women with a high level of mathematical ability are significantly less likely to enter STEM fields than young men, even young men with a lower level of mathematical ability.” There may be self-selection in philosophy that is tracking philosophical ability–equally capable women drop out of the field, whereas particularly capable women stay in the field. That is one possibility. (Such gendered self-selection may have even been operative at each stage from the 50/50 split in undergrad up to the 70/30 split in grad school.) It may be that confidence is operative–there is some work on women in sport (http://www.sciencedirect.com/science/article/pii/S0165176508000888) showing that women tend to be confident in male dominant sports environments and that this confidence pays off: “Our results show that confidence does pay off in terms of performance. On average, overconfident runners improve their times by 2.27 min (or by 4.2%) more than the other runners (see Columns 1 and 3 of Table 3).” I would love to see more work on this, including the publication measure, and I think that would help us to answer some of these questions about gender. But the work of APDA to generate an overall view is really just the start of that picture.

Hey Carolyn, thanks for your response, and sorry I didn’t read carefully the Evaluation and Outlook section, which answers at least one of my questions. I understand that including the number of publications would take a *lot* of work, but it seems to me that you could include the prestige of PhD-granting institution relatively easily, so I hope you will do that eventually.

The hypothesis you mention to the effect that women might disproportionately choose not to apply for jobs in philosophy after they graduate is also interesting and, although I doubt it can explain much of the results you reported in your analysis, it could certainly explain part of it so it would certainly be interesting to see more data about that.

I also hope that you can eventually get around to include the number of publications in the analysis, though I understand the difficulty of doing so. It would not only make the estimation of the independent effects of AOS and gender more accurate, but it would also be really helpful for students who are wondering how much, if at all, they should try to publish while in graduate school.

Unless you have well over a thousand data points, a 28% -vs- 31% gap is probably not statistically significant. (Albeit a lot of these “not-statistically-significant” M/F gaps tend to point in the same direction, which can be significant in of itself.)
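A rough way to check the "well over a thousand data points" intuition is to ask how large a sample would have to be for an observed 28% to differ significantly from a reference value of 31%. The sketch below assumes a two-sided one-sample z-test at the 5% level and treats 31% as a known, fixed value; both are simplifying assumptions, and the function name is hypothetical.

```python
import math


def min_n_for_significance(p_observed: float, p_reference: float,
                           z_crit: float = 1.96) -> int:
    """Smallest n at which |p_observed - p_reference| reaches z_crit standard errors.

    Uses the one-sample z-test for a proportion, with the standard error
    computed under the reference proportion.
    """
    sd_under_reference = math.sqrt(p_reference * (1.0 - p_reference))
    gap = abs(p_observed - p_reference)
    return math.ceil((z_crit * sd_under_reference / gap) ** 2)


n_needed = min_n_for_significance(0.28, 0.31)
print(n_needed)
```

Under these assumptions the threshold comes out a little above 900. If the 31% figure is itself an estimate from a finite sample, as it is here, the comparison becomes a two-sample one and the required sample grows further, which fits the comment's estimate.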

Fair point. I checked to make sure. I looked at only the programs for which we had graduation data from Review of Metaphysics and complete placement data (since we only have gender information on graduates from Review of Metaphysics, the other graduation numbers are for overall graduates) and found that in this group 28.4% of the graduates were women, whereas 25.9% of the placements were women, but the difference was not significant (p=.53, Chi-squared test). In doing this we discovered an error in one of our charts and some potentially confusing language in the document, so we have updated the report.
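For readers who want to see how a test of this kind works, here is a minimal sketch of a Pearson chi-squared test on a 2x2 table using only the standard library. The table layout (gender by placed/not placed), the function name, and the counts in the usage lines are all hypothetical; the report's actual counts are not given in this thread, so this does not reproduce the p = .53 result.

```python
import math


def chi_squared_2x2(a: int, b: int, c: int, d: int) -> tuple[float, float]:
    """Pearson chi-squared test (no continuity correction) for the table [[a, b], [c, d]].

    Returns (statistic, p_value) with one degree of freedom.
    """
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    statistic = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # For 1 degree of freedom, P(X > x) equals erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(statistic / 2.0))
    return statistic, p_value


# Hypothetical counts, for illustration only (NOT the APDA data):
# rows = women, men; columns = placed, not placed.
statistic, p_value = chi_squared_2x2(70, 72, 200, 158)
print(f"chi-squared = {statistic:.3f}, p = {p_value:.3f}")
```

A p-value above the conventional 0.05 threshold, as in the result reported above, means the observed difference in proportions is compatible with chance variation at that sample size.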

A couple of things:

1. Since the conversation is almost certainly going to be focused on the gender disparity, I might as well point out that, yes, as someone who frequently gets accused in these sorts of blogs of being some kind of Evil Feminist type, etc., the data show that women are doing better on the philosophy job market than men. It’s always possible that a full review of the data would overturn that, but I think that’s unlikely.

2. Okay, so why? It seems to me that there are a lot of possibilities, and I want to lay out as many as I can that I think have a non-trivial chance of being a contributing factor. The order does not imply a ranking of their likelihood:

a. The women who earn philosophy PhDs are more likely than men to do their work in subfields of philosophy that are popular with hiring departments.

b. More women drop out of philosophy PhD programs than men (for a variety of reasons), and so the women who graduate are on average stronger than the men in terms of the virtues typically required (persistence, et al.).

c. Men are more likely than women to focus their resume/CV building activities on things that are less important for getting hired (e.g., more publications above a small few) than things that are more important for getting hired (e.g., gaining teaching experience).

d. The women who do philosophy PhDs are more likely to be white and from a privileged undergraduate institution (i.e., elite SLACs) than the men who do philosophy PhDs, and undergraduate institution is a major factor in getting hired.

e. The women who do philosophy PhDs are more likely to have a PhD from an elite institution than the men who do philosophy PhDs, because the elite institutions do a better job of recruiting women graduate students.

f. Many hiring departments are correcting for underrepresentation of women by interviewing more women, and thus those women are benefiting from having a better stage from which to show their accomplishments/merits as candidates.

Here are some possibilities that I think are highly unlikely:

g. Women are doing systematically better philosophical work than men. (I’m assuming there’s no gender difference here, and I certainly have never noticed any such difference.)

h. Departments are acting on direct mandates from university administration that they must hire women.

My guess? Some combination of several things from a to f. But we need more data, no?

One can’t avoid noticing that all of your hypotheses as to why women on the job market are less qualified on average are consistent with the assumption that this group is on average equally talented and equally driven as the men.

But why assume this? Why not assume that the qualifications we see track the underlying talent and perseverance of the two groups? Stepping away from any political considerations, isn’t that the simplest and most plausible explanation?

And to say that this group of women is not as talented and/or driven as the group of men is not to make a more general claim about the level of talent and drive in women vs. men. It may very reasonably be thought that, for whatever reason, women with high levels of talent and drive choose other professions. Certainly women have come into the medical and life sciences professions in high numbers, and have come to dominate a number of other professions, such as psychology and social work.

Why might less talented/driven women stick to philosophy as compared to men? Perhaps it is an unintended effect of the very efforts to encourage women to pursue philosophy. They are told that the usual signs that a student might take to mean that they are struggling, such as the inability to participate fully in a seminar or in a philosophical bull session, are actually fully attributable to stereotype threat or some other impact of sexism. At every stage, they get extra encouragement that the men don’t get, leading the weaker students among them to persist when like males would be far more likely to drop out.

In any case, if we really want to get at the true explanation of the data we see, we should entertain all real possibilities.

“women on the job market are less qualified on average”

Where are you getting this?

Well, I guess I see this as being a pretty inescapable consequence of two points (going back to data from previous years):

1) women who are hired are distinctly less qualified, in the sense of being published (60% men vs 46% women), and especially in the sense of being published in prestige journals (27% men vs 11% women, as I recollect), than men.

2) women receive job offers in pretty close proportion to their representation at the end of grad school. (each close to 30%, plus or minus a few points).

I don’t see how it’s possible to make those numbers square unless women coming into the job market (or at least those who have reached the end of grad school, presumably pretty much exactly the same group, at least relative to men) are on average less qualified than the men. Because the differences cited in 1) are pretty dramatic, and the differences cited in 2) appear to be pretty small, I don’t see any real room for another conclusion. Under any reasonable set of assumptions, that would be the consequence.

Now I guess one might argue that, even if women on the job market are less qualified (i.e., less published) than men, that may be because women are hired earlier in their careers, and they may ultimately be as well published, and well qualified, as men. There is of course no direct evidence for this claim. I guess I’d find it pretty surprising to find in particular that the major gap in publishing in prestige journals might be erased, comparing like to like. And another speculation is that it’s actually rarer and harder to get things published in the areas in which women have a higher representation. But this argument is particularly hard to credit. Women have a higher presence in moral philosophy, history of philosophy, and feminist philosophy. Is there any plausible reason to believe that in these areas, particularly, it is rarer and harder to get something published?

I don’t think it is quite right to move from the reported differences in publication rate to the conclusion that women are less qualified on average. For one, I was the one who collected the publication data. I know that my process of counting could have itself been influenced by biases. I did not count everything (e.g. book reviews, commentaries, invited chapters), and I had something of a system, but I was looking at documents with names while deciding what counted, and that method has some obvious setbacks. The reason I am not including that data now is that I am not satisfied with the process I used. Everything that we are doing now with APDA, when we assign an AOS category, for example, is as systematic and name-free as possible, to protect against these sorts of things. But let’s imagine that my system of collecting publication data was better than it was, and not open to the effects of gender bias–would fewer mean publications (but equivalent median publications) necessarily point to women being less qualified on average? I think the answer is no. That biases in the publication process keep women from publishing equally good work at the same rate as men is already something that our discipline and many other disciplines are taking seriously. This means that you would expect women who are equally qualified to have fewer publications than men. Further, the mean publication number is primarily set by men at the extremes, with 5+ peer-reviewed publications (http://www.newappsblog.com/2014/12/gender-and-publications.html). Most men and women have only 1 publication. What can we say about these extreme cases? Are they clearly more qualified candidates? Are they 5 times as good as most candidates? Again, I think the answer is no. So better than number of publications for answering this sort of question would be publication profile (rated, say, 1-7 by participants who have a professional profile similar to that of a search committee). 
I would eventually like to see what sort of publication profiles women and men have on average, and whether there is a significant difference there, but we are far from that. For these reasons and others, I really don’t think the evidence is there for your claim and I think it is hasty to make it.

I agree with Carolyn that Lexington is a little bit too quick in drawing conclusions above. But I want to point out that, as I noted back when the data she previously collected were discussed on this blog, even when people who have more than 5 publications are ignored, there still remains a significant difference between men and women in the number of publications. Moreover, Carolyn talks about the bias against women in the publication process as if it was a demonstrated fact, but as far as I know — please tell me if I’m wrong — the existence of such a bias in philosophy is still hypothetical. And, insofar as there seems to be a preference for women in hiring (at least, given the data we now have, the existence of such a preference seems more likely than not), we have at least some reason to be skeptical about the existence of a bias against women in the publication process.

Re h. I was on a hiring committee after a retirement where the administration told us, “You can keep this line if and only if you hire a woman to fill it. We will not approve it otherwise.” So we did.

Re f or h. A close friend works at a school that hired for what she told me was a woman-only job. They interviewed 8 women and 2 men, flew out 3 women, and hired a woman who had not finished the PhD, never taught, and had 0 publications.

That’s some data. I suspect this is more common than people realize.

Regarding “h,” what you’re reporting is a common way I’ve seen it reported: a few stories here and there about departments being told they must hire a woman. I even know of one case myself where I’m pretty sure it happened. And so, sure, it probably happens in a few places. But I don’t see it as an explanatory factor for the gender disparities found in the data CDJ has posted. My contention is that I don’t think it happens particularly often, and the few times it happens are probably matched/canceled out by the times sexism leads to the hiring of a man over a woman. I could be wrong, but I’d need to see some evidence to believe I’m wrong.

First, I would like to point out that, among the hypotheses you suggested, we can probably already rule out (a). Indeed, the report that was just published shows that, even when you control for AOS, being a woman still increases the odds that you will get a job. To be sure, the categories used for AOS are somewhat crude, so it’s possible that a more fine-grained analysis might show something different, but honestly at this point it seems rather implausible.

As for the other hypotheses you suggest, (b), (d) and (e) would be relatively easy to test, though I would be very surprised if they affected the results very much. All the same, I agree that we should try and test them, if only to rule them out.

On the other hand, I’m very skeptical of (c). The only merit of this kind of hypothesis, it seems to me, is that it might explain the data without having to assume that, other things being equal, one is more likely to get a job if one is a woman. It has no independent plausibility and, perhaps more importantly, it’s hard to see how we could ever test it. So, if you insist that, unless we have ruled out this hypothesis and every other that has no independent plausibility but could explain the data, we cannot conclude that there is probably a preference for women, then it seems to me that you are in effect saying that, no matter the amount of evidence we have, we shall never be in a position to draw that conclusion.

I don’t really see how this is different from a radical sort of inductive skepticism that, outside of the philosophy classroom, nobody really takes seriously. (This isn’t meant to be dismissive of philosophy, since I think it’s a really interesting question why this kind of skepticism is unwarranted, but it doesn’t change the fact that in practice we don’t take it seriously.) I seriously doubt that we’d see this kind of skepticism if the data didn’t suggest that women are, other things equal, more likely to get a job.

In particular, I very much doubt that, if the data had shown that *men* were statistically more likely than women to get a job, people would have entertained hypotheses like (c). And it’s certainly not because we have a reason to think that (c) might be true, but no reason to think that (c*) — the same hypothesis where the role of men and women are reversed — might be true. In fact, as far as I can tell, we have no reason to think that either (c) or (c*) might be true. As David Wallace already noted, when discussing those data, that’s something we should keep in mind.

Thanks for sharing this very interesting data! It’s certainly very helpful for current and prospective grad students. I just have two comments about the statistical methods used:

1) Is it possible to report the results in terms of margins/marginal effects, rather than in terms of odds? Because changes in log odds are very difficult to interpret, even for people familiar with logistic regressions. One way to compute marginal effects is to hold all other variables at their sample averages, and change one variable (say, gender). This will allow you to calculate the difference in probability of placement between an “average” male candidate and an “average” female candidate. (There are also other ways to calculate marginal effects. For instance, for each candidate, we could ignore the true gender of each candidate, and use the model to calculate the difference in probability of placement if they were male vs if they were female. After repeating this for every candidate, we can average out the differences obtained, giving an average marginal effect, albeit using more data than in the first method.)

2) Not sure if you tried this, but you could also try interaction terms – such as (female*value theory) – which test the hypothesis that the relationship between gender and placement is different for each of the AOSs. (When you include publications, you can also use interaction terms to see if the relationship between publication and placement changes by AOS)
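To make the two ways of computing a marginal effect in point 1 concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the coefficients, the single AOS indicator, and the eight candidates are made up and are not taken from the APDA report or its model.

```python
import math

def logistic(z):
    """Map a log-odds value to a probability."""
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical fitted coefficients on the log-odds scale (NOT from the report).
INTERCEPT = -0.2
B_FEMALE = 0.4
B_VALUE_THEORY = 0.3

# Hypothetical candidates as (female, value_theory) 0/1 indicators.
candidates = [(0, 0), (0, 1), (1, 0), (1, 1), (0, 0), (0, 1), (1, 1), (0, 0)]

def predict(female, value_theory):
    """Predicted probability of placement under the hypothetical model."""
    return logistic(INTERCEPT + B_FEMALE * female + B_VALUE_THEORY * value_theory)

def effect_at_means(rows):
    """Method 1: hold the other variable at its sample average, flip gender."""
    mean_vt = sum(vt for _, vt in rows) / len(rows)
    return predict(1, mean_vt) - predict(0, mean_vt)

def average_marginal_effect(rows):
    """Method 2: flip gender for every candidate, then average the differences."""
    diffs = [predict(1, vt) - predict(0, vt) for _, vt in rows]
    return sum(diffs) / len(diffs)

print("effect at means:        ", effect_at_means(candidates))
print("average marginal effect:", average_marginal_effect(candidates))
```

Both numbers are differences in probability rather than changes in log odds, which is what makes them easier to read. An interaction term such as the one in point 2 would add one more coefficient multiplied by `female * value_theory` inside `predict`.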

Thanks again for your efforts, I look forward to seeing more results!

These are great suggestions. Regarding interpretation of results we will do our best to make future updates more accessible to all readers. Additionally, we hope to be able to investigate many of these questions in future updates, data permitting (e.g., power).

Sure thing, and thanks again for your efforts! Yeah I can definitely see power/data being an issue, given that there really aren’t that many data points (and especially so given the issues with data reporting). I should also say that there are some R packages to help compute marginal effects – the ‘mfx’ package (https://cran.r-project.org/web/packages/mfx/mfx.pdf) and the ‘erer’ package (second part of the article here: http://www.r-bloggers.com/probitlogit-marginal-effects-in-r/). Thanks and hope to hear more results from you guys, cheers!

Another possibility is that when recruiting graduate students, departments systematically over-estimate the potential of male students, and for that reason, admit comparatively more weak male students than female ones.

(I suppose this would be a version of g), since it would mean that female graduate students were likely to be doing systematically better work than male graduate students; but so understood, I’m not sure why we should dismiss it out of hand: I guess what the poster intended to dismiss out of hand was the suggestion that women were simply better at philosophy than men, and doing better work for that reason.)

This is a good point. But I think (g) gets pretty complicated. For one, even systematic bias at the admissions stage might end up getting smoothed over by the seemingly random ways in which graduate students develop. I’m sure we all know plenty of people with seemingly high potential who dropped out of the program, and people with seemingly low potential who did really well. I’m not sure whether the judgments made by admissions committees track much of anything. And a second point is that I’m not sure that even if women were doing systematically better philosophical work than men, it would matter all that much to hiring outcomes. And so even if true, it might not explain anything. I think one could make a decent case that at this point, there are far more job candidates doing acceptable levels of philosophical work than there are job opportunities. We do sometimes forget, I think, that for many departments doing hiring, the publication requirements for tenure are below what the average job seeker would be able to produce. It’s not all that unusual to hear about job candidates who have already met the publication requirements for tenure at most institutions when they hit the job market. (This is part of why I’ve frequently made the semi-serious suggestion, pretty unpopular to just about everyone in history, that once departments weed out the obviously unqualified candidates they should consider hiring by lottery.)

“I think one could make a decent case that at this point, there are far more job candidates doing acceptable levels of philosophical work than there are job opportunities.”

But:

(i) it’s not clear what “acceptable” means except “competitive for hiring”. If so, then the steeper the job market, the higher the bar for “acceptable”.

(ii) Even granted a non-circular sense of “acceptable”, I think you need something stronger: that, among the candidates doing acceptable work, it’s not possible to make any robust comparisons of quality of work. That doesn’t sound plausible. (Its implausibility is compatible with those comparisons not *always* being makeable.)

We’d probably have a pretty tough time coming up with what “acceptable” amounts to. But, yes, “competitive for hiring” is a close approximation. But I don’t think it follows that the steeper the job market, the higher the bar for “acceptable.” That would only follow if published philosophical work were the only or primary thing hiring committees are looking for, and I don’t think that’s what’s going on with most hiring committees. My best guess is that most departments have a certain expectation (maybe 1 or 2 published articles and/or an interesting sound project and a good story to tell about how it leads to publications), and that more and more candidates are meeting that expectation.

Let me add to Matt Drabek’s list

i. Gender sometimes breaks ties, i.e. when a hiring committee has two basically-equivalent-quality candidates, it might choose a woman over a man so as to address overall underrepresentation.

“Gender can break ties” is legal in the UK under the 2010 Equality Act (I don’t know about the US) and seems to me (again, in the UK) to be broadly reasonable.

As a sanity check for interpreting the gender data, I think it’s interesting to ask what the reaction would have been if it had turned out that men were statistically *more* likely to get jobs than women. I *strongly* suspect that “appointment panels somewhat prefer men, ceteris paribus” would have been taken very seriously indeed as a possible reason. That being the case, I’d be nervous if “appointment panels somewhat prefer women, ceteris paribus” wasn’t taken seriously here.

(This shouldn’t be construed as an accusation of injustice. As it happens, I’m in favour of appointment panels slightly preferring women ceteris paribus; cf my earlier comment.)

Yes, I think this is all correct. But generating potential interpretations would have to be done against a background of what we know and reasonably believe about the profession and about society. Thinking back to the original list I generated, (a) wouldn’t make any sense if the data were to show that men are more likely to get jobs than women. The reason is that we know (or at least very reasonably believe) that men are not more likely to do work in areas that have seen recent spikes in job ads. (b) wouldn’t make a lot of sense either, since it’s very unlikely that men drop out of PhD programs at higher rates than women. I suppose (c) and (d) would remain as empirical possibilities, though unlikely ones. By the time we’re done culling our other explanatory possibilities, “appointment panels somewhat prefer men, ceteris paribus” is probably the strongest contender remaining. That’s not so in the case of the data showing women more likely to get jobs.

Philippe Lemoine–Because I am at an airport waiting for a flight, I played around with the data a little. I agree there is still a difference in the means for women and men (for those years, for that data) if we exclude those with 5+ publications. Did you find it to be significant? (For me it wasn’t.) If you still have the original spreadsheet, you can check this, but I did a small exercise. I looked at women and men with matching profiles from this data who were placed in tenure-track positions with no publications. By matching profiles I mean that they are in the same AOS category and from the same philosophy program. (I should have looked at graduation year, but didn’t.) I looked up what they have published now, according to their most recent c.v. or PhilPapers. I wasn’t able to find many of these–11 women, 11 men. (In some cases there were 2 men to 1 woman, or vice versa, and then I just went with the first that came up on the list as a match.) Of course, my counting could be sketchy for the reasons mentioned above, but I only excluded a foreign-language publication, counted dual-author publications as half, and didn’t count book reviews. Here are the two publication profiles, one per gender. Profile A: 19 publications (including PPR, European Journal of Philosophy, British Journal for the Philosophy of Science, Philosophers Imprint, Philosophy Compass, Philosophy of Science, British Journal for the Philosophy of Science, and Synthese). Profile B: 18 publications (including PPR, Oxford Studies in Ancient Philosophy, Philosophy of Science, Ethics, Ancient Philosophy, Journal of the History of Philosophy, and Episteme). I don’t see a clear difference between these profiles. Granted, it is a small sample.

I found that, if we ignore people who have more than 5 publications, the mean number of publications is 1.288 for men and 0.937 for women. Using a two-tailed Student’s t-test with unequal variance, I find a p-value of 0.006, which is highly significant. If I exclude not only people with more than 5 publications, but also those with 5 publications (as you seem to have done), the mean number of publications is 1.175 for men and 0.85 for women. The p-value is 0.004, which is again highly significant. Since I did that pretty quickly, I may have made a mistake, so I encourage you to check my results: https://dl.dropboxusercontent.com/u/110113461/PlacementData%20-%20Revised.xls.

As for the little experiment you just performed, while I think it may prove interesting to do that on a larger scale, I don’t think it can tell us much since, as you noted yourself, the sample is very small. Moreover, even if we ignore that problem and the fact that we’d have to choose people who graduated the same year, you only compared one man and one woman in your sample. But, if we really want to know whether men and women who were hired with no publication went on to publish equally well, we need to rate the publication profile of everyone in the sample and check whether there is a significant difference between the mean rating of men and that of women.

I see–I only looked at tenure-track jobs. For that, excluding those with 5 and above publications, I got p>.05 for 2-tailed, equal variance. Yeah, I agree that it would be better to have a larger sample. A future project, perhaps!

When I only consider people who got a tenure-track job with less than 5 publications, I found that men had a mean number of publications of 1.195 and women of 0.892. A two-tailed Student’s t-test with unequal variance gives a p-value of 0.038. I suspect that, in this case, it’s a mistake to use the version of the test that assumes equal variance and, therefore, uses a pooled variance estimate to calculate the statistic. But, even when I use that version of the test, I find a p-value of 0.047. This result is at odds with yours, but since I excluded people with 5 or more publications “manually”, I wouldn’t be surprised if I made a few mistakes. However, I would be surprised if I made enough mistakes to change the result significantly, so I doubt that you found a p-value much above 0.05. (I updated the file on Dropbox so you can check my results.)
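For readers who want to see how the two versions of the test mentioned here differ, the following is a rough Python sketch of the unequal-variance (Welch) statistic and the pooled-variance statistic. The publication counts are hypothetical and are not the data under discussion. The sketch stops at the test statistic and degrees of freedom; a p-value needs the t distribution, which, for example, `scipy.stats.ttest_ind` with `equal_var=False` or `equal_var=True` would supply.

```python
import math
from statistics import mean, variance

def welch_t(a, b):
    """Unequal-variance t statistic with Welch-Satterthwaite degrees of freedom."""
    va = variance(a) / len(a)  # squared standard error of mean(a)
    vb = variance(b) / len(b)  # squared standard error of mean(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df

def pooled_t(a, b):
    """Equal-variance t statistic using a pooled variance estimate."""
    na, nb = len(a), len(b)
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    t = (mean(a) - mean(b)) / math.sqrt(sp2 * (1 / na + 1 / nb))
    return t, na + nb - 2

# Hypothetical publication counts for two groups (illustration only).
group_a = [0, 0, 1, 1, 1, 2, 2, 3, 4, 4]
group_b = [0, 0, 0, 1, 1, 1, 1, 2]

print("Welch :", welch_t(group_a, group_b))
print("Pooled:", pooled_t(group_a, group_b))
```

When the groups differ in both size and spread, the two statistics and their degrees of freedom diverge, which is one way a result can land on either side of 0.05 depending on the version used.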

Since the critical value of 0.05 is arbitrary and the difference between the mean number of publications for men and that for women is highly significant as soon as we relax just a little bit the restrictions you put on the analysis, I think it wouldn’t be very reasonable to deny that, according to the data you collected, men who got a job had on average more publications than women and that it wasn’t a statistical fluke. A more interesting question, in my opinion, is how big is the difference and how much, if at all (I think it probably would), including the number of publications in the analysis would affect the ratios of odds calculated in the report.

As for the experiment that you performed, the problem is not only that your sample is very small, but also that, as I noted above, you only compared one man and one woman in that sample. I agree that, based on what you said, they have a very similar publication profile. But what about the other people in the sample? Was it the case that, at least in that small sample, there was no difference on average between men and women? Finally, even if that was the case and if the sample was large enough, it wouldn’t tell us much since you chose people who had the same number of publications when they were hired. I’m not saying that it couldn’t be interesting to do that, but I think that it’s more urgent to include the number of publications in the analysis.

I compared 11 men to 11 women.

(That was the total number of publications for each set of 11.)

Oh, I see now, it makes a lot more sense. I just thought that you had found 2 freaks who published like crazy 😀 But, in any case, my other points remain and they are the most important.

That being said, I think it could be interesting to look at the publications of people not only when they were hired, but also after that. It’s not particularly interesting to compare the publications down the line of people who had the same number/quality of publications when they were hired, but it might be interesting to compare the publications down the line of people who did *not* have the same number/quality of publications when they were hired.

So, if you’re going to rate publication profiles and use that to include publications in the analysis, I think it would be interesting to rate not just one but two publication profiles for each individual, i.e. one for their publications at the time they were hired and one for their publications today. If you find that, when the rating of the second profile is used in the regression analysis, the independent effect of gender disappears or is reduced, it could mean that hiring committees are picking up on a systematic difference between men and women at the time of hiring that we can’t see with the data we have.

You have lots of good ideas, and I would like to come back to them when we potentially integrate this type of data into the project. (But note that this may be years in the future.) If it seems as though I have forgotten one or more of these points at a later date, please do bring them up with me again.

Sure, will do! And thanks again to you and your collaborators for doing that. Again, I understand that including publications in the analysis will be a lot of work so I don’t expect this to happen overnight, but I hope that at least you will include the prestige of PhD-granting institution soon, given that it should be relatively quick to do. Are you going to release the data so that everyone can do their own analysis?

We will only release full data to those with IRB approval for research, but at some point we hope to make it easier to access the public data by making, e.g., a public data csv link. Everything public is available through the home page, which we will be updating soon to make more user friendly.

It would be great if you could publish a csv file that contains data about success on the market, PhD-granting institution, year of graduation, AOS (metaphysics and epistemology, value theory, history and logic, science and mathematics) and gender. You don’t have to publish data that include people’s names, since we don’t need that for statistical analysis. But if we have a csv file with the information described above, everyone could do their own analysis. For instance, we could add a variable for the prestige of PhD-granting institution in the regression analysis, to see how it affects the coefficients that were published in the report. Even if we found that the prestige of your PhD-granting institution had a significant, independent effect on your odds of getting a job, it wouldn’t show that it’s a mere halo effect, since it seems plausible that people who got their PhD from a prestigious institution also had better letters of recommendation, writing samples, job talks, etc. But it would make the coefficients of the other variables such as gender and AOS more accurate, so it would be worth it.

I guess what I want to know is whether one would need IRB approval to get the data I was describing above. I didn’t even know what IRB approval is until I checked just now and it looks as though it would be a huge pain to get it. I don’t think anyone will bother to analyze the data you collected if they need to go through such a process before they can even start to work on them.

The only category of collected data that we are not including in the public set is “name.” (I am not sure what to do yet about the non-collected data, such as gender. There are complicated issues here and I will have to consult with the rest of the team on this. But as you know, I favor transparency and openness.) The steps to IRB approval should be research ethics training (usually free and through CITI, taking around 5-6 hours to complete) and the submission of a form describing the research and how it avoids potential ethics violations. Most universities require IRB approval for research, and most journals would not accept a paper unless the research had prior IRB approval. It is a basic and important step in research that forces researchers to think about the impact of their work on others. This data set is a gray area in terms of research ethics. I decided to be more conservative with it for two connected reasons: 1) although the data qualifies as public, compiling it in one place adds a layer of publicity to it that it wouldn’t have had otherwise and 2) there is the potential for harm to placed individuals in having one’s placement record easily compared to others. So-called “big data” is bringing issues like these to the forefront, and it may be that in the end I am being overly cautious, but my current sense is that it is unnecessary to publicly release names so long as we release unique identifiers in their place and that this approach allows for an extra layer of privacy that might make up for the extra layer of publicity we are bringing to the data. I also like that it focuses the attention of those looking at the data onto the comparable categories, rather than individuals.

Sorry, that’s not right–we are also not including email in the public set.

Note that given that 10% more women than men get placements in their first year, and assuming that a significant proportion of the men who miss out subsequently apply for jobs in future years, it follows that the ratio of males to females in the job-applicant pool is higher than the ratio of males to female graduates in a given year. This means that the chances of a male in the applicant pool getting a job are even lower than that suggested by the figures above.

Here is another possible reason for the higher percentage of women being hired. Imagine institutions hiring for 3 positions – whether all in one go or over a period of years. With a 70/30 ratio of men to women graduates, other things being equal, one would expect 34% of institutions to hire 3 men. Hiring for 4 positions, one would expect 24% of institutions to hire 4 men. But how many would? In the climate which has been created, they would think, ‘It will look really sexist if we hire three/four men, we better hire at least one woman.’ Or the administration would insist on hiring at least one woman.
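The 34% and 24% figures above follow from simple arithmetic, sketched below in Python. The sketch assumes that each hire is independent and is a man with probability 0.7; that independence assumption is a simplification made for illustration, and `prob_all_men` is a hypothetical helper name.

```python
def prob_all_men(share_men, positions):
    """Chance that every one of `positions` independent hires is a man."""
    return share_men ** positions

for k in (3, 4):
    # 0.7 is the share of men among graduates assumed in the comment above.
    print(f"{k} positions: {prob_all_men(0.7, k):.0%}")
```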

This is sex discrimination of course. As is the very common view, advocated above by David Wallace, of being ‘in favour of appointment panels slightly preferring women ceteris paribus.’

This is based on a misunderstanding, I think, of our report, which is due to a lack of clarity on our side. In these analyses we were not able to compare graduation information to placement information for gender, since most of the graduation information we have does not include gender information. So the numbers above reflect only those people who are in our database (nearly all of whom found some sort of placement). That is, of the placed candidates in our database, women had a higher percentage of placements reported in the first year post-graduation. We are planning to release updated analyses towards the end of the year, and we have updated the language in the report to make this clearer. One of the things that may have led you and others astray is that a graph on gender (above) says “no reported placements,” where it should not have included this category. (The “no reported placements” wedge actually reflects all temporary placements other than postdocs/fellowships, which we mistakenly interpreted as “no reported placements” for that graph.)

Thank you for your reply, Carolyn, and for your hard work in producing the report. I will take another look.

I would be grateful if you would let us know whether you are planning on collecting publication data in the near future. You say above that you were concerned that ‘I know that my process of counting could have itself been influenced by biases.’ I am not clear what you mean. Presumably you don’t count, ‘1,2,3, 5’ because of biases. And you can decide in advance what you are going to count – for instance refereed journal articles only, as you suggest. So I cannot see how biases could be a serious problem.

PS If you were worried seriously about biases then it would be a simple matter to anonymise publication records before counting them. An assistant could simply copy and paste each one into a file and give it an ID number. You then count the publications and assign it to the ID number and then subsequently you or the assistant match up the ID number to the person or simply to the sex of the person.
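The procedure described in this PS could be sketched roughly as follows in Python. The record layout (`name`, `sex`, `publications`) and all function names are hypothetical, and the sketch assumes the publication entries themselves do not reveal the author's name, which a real publication list often would.

```python
import random

def anonymise(records, seed=None):
    """Replace each person with a shuffled ID; keep sex in a separate lookup."""
    rng = random.Random(seed)
    ids = list(range(1, len(records) + 1))
    rng.shuffle(ids)  # IDs carry no information about the original order
    blinded, lookup = [], {}
    for rec_id, rec in zip(ids, records):
        blinded.append({"id": rec_id, "publications": rec["publications"]})
        lookup[rec_id] = rec["sex"]  # held back until counting is finished
    blinded.sort(key=lambda r: r["id"])
    return blinded, lookup

def count_publications(blinded, counts_as_publication):
    """Count entries per ID using a rule that was fixed in advance."""
    return {r["id"]: sum(1 for p in r["publications"] if counts_as_publication(p))
            for r in blinded}

def rejoin(counts, lookup):
    """Match counts back to sex only after all counting is done."""
    by_sex = {}
    for rec_id, n in counts.items():
        by_sex.setdefault(lookup[rec_id], []).append(n)
    return by_sex
```

The person counting would see only the output of `anonymise`, while the lookup table stays with the assistant until the counts are final.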

Many people are concentrating on the sex differences, with females seeming to do better on the job market. Given that every job says ‘we strongly encourage women to apply,’ I don’t find this disparity particularly strange.

I’m more interested in the fact that for both women and men the placement percentages are much higher than I would expect.

Of the people I’ve met over the years, only 1 of them obtained a permanent job. I personally have struggled a lot on the job market, despite having a good publishing record. I suspect that these percentages are inflated. Sampling bias due to the 50% drop out rate may be partly to blame.

Could we please talk about this some?

APDA should have new numbers in the next few months. We are currently hand checking the data, which takes a long time (and funding for the students only covered the summer months). The new numbers won’t take care of the issue of graduation rates, but will hopefully give all of us a better idea of what happens after most people graduate. (Also note that the analyses currently only capture those in our database with placements, and so not graduates who were not placed or not reported.)

Hello,

Thanks for getting back to me. If the data only includes people with placements that would, of course, seriously inflate the numbers.

Another interesting thing is it seems your chances of getting a permanent job drop off quickly after the first year. So, it seems this data shows that people shouldn’t stick around for years looking for a permanent job. We’ve all heard the success stories, but I imagine there are way more failures.