Rejection Rates Should Not Be a Measure of Journal Quality (guest post)

“If philosophy relies too heavily on rejection rates as a measure for journal quality or prestige, we run the risk of further degrading the quality of peer review.”

In the following post, Toby Handfield, Professor of Philosophy at Monash University, and Kevin Zollman, Professor of Philosophy and Social and Decision Sciences at Carnegie Mellon University, explain why they believe the common practice of using journal rejection rates as a proxy for journal quality is bad.

This is the second in a series of weekly guest posts by different authors at Daily Nous this summer.

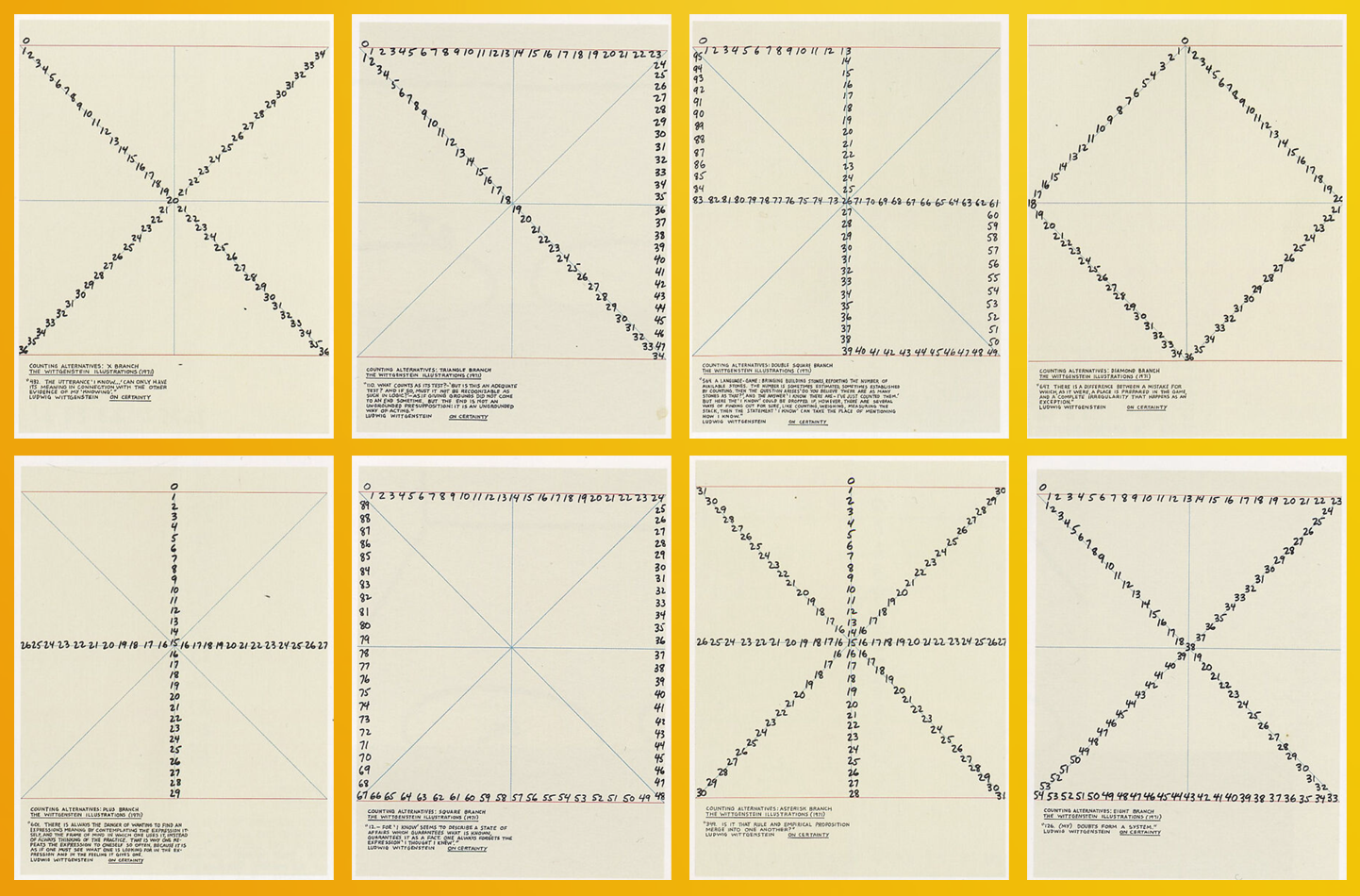

[Mel Bochner, “Counting Alternatives: The Wittgenstein Illustrations” (selections)]

Rejection Rates Should Not Be a Measure of Journal Quality

by Toby Handfield and Kevin Zollman

Ask any philosopher about the state of publishing in academic philosophy and they will complain. Near the top of the list will be the quality of reviews (they’re poor) and rejection rates (they’re high). Indeed, philosophy does have extremely high rejection rates relative to other fields. It is hard to understand why. Perhaps there is simply more low-quality work in philosophy than in other fields. Or, perhaps, high rejection rates are themselves something that philosophy journals strive to maintain. Many journals strive to publish only the very best work within their purview, and perhaps they use their rejection rates to show themselves that they are succeeding.

Like many fields, philosophy also has an implicit hierarchy of journals. Of course, people disagree at the margins, but there seems to be widespread agreement among anglophone philosophers (at least) about what counts as a top 5 or top 10 journal. Looking at some (noisy) data about rejection rates, it does appear that the most highly regarded journals have high rejection rates. So, while we complain about rejection rates, we also seem to—directly or indirectly—reward journals that reject often.

It is quite natural to use rejection rates as a kind of proxy for the quality of the journal, especially in a field like philosophy where other qualitative and quantitative measures of quality are somewhat unreliable. We think it is quite common for philosophers to use the rejection rates of journals as a proxy for paper quality when thinking about hiring, promotion, and tenure. It’s impressive when a graduate student has published in The Philosophical Review, in large part because The Philosophical Review rejects so many papers. Rejection rates featured prominently—among many other things—in the recent controversy surrounding the Journal of Political Philosophy.

We, along with co-author Julian García, argue that this might be a dangerous mistake. (This paper is forthcoming in Philosophy of Science—a journal that, we feel obligated to point out, has a high rejection rate.) Our basic argument is that as journals become implicitly or explicitly judged by their rejection rates, the quality of peer review will go down, thus making journals worse. We do so by using a formal model, but the basic idea is not hard to understand.

We start by asking a very basic question: what is it that a journal is striving to achieve? We consider two alternatives: (1) that the journal is trying to maximize the average quality of its published papers or (2) that the journal is trying to maximize its rejection rate. The journal must decide both what threshold counts as good enough for their journal and also how much effort to invest in peer review. They can always make peer review better, but it comes at a cost (something that is all too familiar).

This already shows why judging journals by rejection rates can potentially be quite harmful. If a journal is merely striving to maximize its rejection rate, it doesn’t much care who it rejects. So, it has less incentive to invest in high quality peer review than does a journal that is judged by the average quality of papers in the journal. After all, if a journal only cares about rejection rates, it doesn’t much matter if a rejected paper was good or bad.

This already is probably sufficient to give one pause, but it actually gets much worse. In that quick argument, we implicitly assumed that there was a fixed population of authors who mindlessly submitted to the journal, hoping to get lucky. However, in the real world, authors might be aware of their chance of acceptance and choose not to submit if they regard the effort as not worth the cost.

A journal editor who wants to maintain a high rejection rate now has a problem. If they are too selective, authors of bad papers might opt not to submit, and a paper that isn’t submitted can’t be rejected. If a journal very predictably rejects papers below a given standard, their rejection rates will go down because authors of less good papers will know they don’t stand a chance of being accepted. A journal editor who cares about their journal’s rejection rate will then be motivated to tolerate more error in its peer review process in order to give authors a fighting chance to be accepted. They use their unreliable peer review as a carrot to encourage authors to submit, which in turn allows the journal to keep their rejection rates high.
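The self-selection mechanism described above can be illustrated with a toy simulation. This is only a sketch under simplifying assumptions, not the formal model from the paper: paper quality is spread evenly between 0 and 1, review observes quality plus normally distributed noise, and an author submits only when the expected payoff exceeds a fixed submission cost. The names `accept_prob` and `rejection_rate` and the values for `benefit` and `cost` are hypothetical choices made for illustration.

```python
import math


def accept_prob(quality, threshold, noise):
    """Chance that a noisy review signal (quality + error) clears the threshold.

    Assumption: the error is Normal(0, noise); noise == 0 means perfect review.
    """
    if noise == 0:
        return 1.0 if quality >= threshold else 0.0
    z = (quality - threshold) / noise
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def rejection_rate(threshold, noise, benefit=1.0, cost=0.1, n=1001):
    """Expected rejection rate among the authors who choose to submit.

    Assumption: an author submits only if accept_prob * benefit > cost.
    """
    submitted = 0
    expected_accepts = 0.0
    for i in range(n):
        quality = i / (n - 1)  # evenly spaced qualities in [0, 1]
        p = accept_prob(quality, threshold, noise)
        if p * benefit > cost:
            submitted += 1
            expected_accepts += p
    if submitted == 0:
        return 0.0
    return 1.0 - expected_accepts / submitted


for noise in (0.0, 0.05, 0.3):
    print(f"noise={noise}: rejection rate = {rejection_rate(0.8, noise):.2f}")
```

In this toy setup, perfectly reliable review rejects nobody, because only authors whose papers clear the bar bother to submit. For the parameter values shown, adding noise draws in weaker papers and pushes the rejection rate up.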

We consider several variations on our model to demonstrate how this result is robust to different ways that authors might be incentivized to publish in different journals. We would encourage the interested reader to look at the details in the paper.

Of course, our method is to use simplified models, and in doing so we run the risk that a simplification might be driving the results. Most concerning, in our mind, is that our model features a world with only one journal. Philosophy has multiple journals, although in some fields of philosophy a single journal might dominate the area as the premier outlet for work in that area. Future work would need to determine if this is a critical assumption, although our guess is that it is not.

Although we don’t investigate this in our paper, we think that the process we identify might also exist in other selection processes like college and graduate school admission or hiring. In the US, colleges often advertise the selectivity of their admissions process, and we suspect that they face the same perverse incentives we identify.

Whether you share our intuition about this or not, we think the process we identify is concerning. If philosophy relies too heavily on rejection rates as a measure for journal quality or prestige, we run the risk of further degrading the quality of peer review. We think it is potentially problematic that journals sometimes advertise their rejection rates, since doing so may contribute to rejection rates becoming a sought-after mark of prestige. Furthermore, we think it’s important that philosophy as a discipline walk back its use of rejection rates as a proxy for journal quality. To the extent that we are doing that now, it may actually serve to undermine the very thing we are hoping to achieve.

The pdf I downloaded says that various results are proven in the appendix, but there doesn’t seem to be an appendix. Where can I find the proofs?

Oh! I guess they don’t put the online appendix into the online-first part of PoS… I’ll have to ask Jim about this. Let me make sure I have the most recent version and I’ll put it online somewhere tomorrow. (Sorry, co-authors are probably asleep at the moment.)

Do people use rejection rate as a proxy for journal quality? I haven’t seen rejection rate mentioned in most of the discussions of journal prestige that I’ve seen – instead, they seem to rely on the sort of impressionistic judgments that are likely mostly informed by the quality of papers that people have seen come out in the journal. In fact, I’ve hardly seen rejection rates mentioned anywhere at all – I’ve seen claims that some philosophy journals have rejection rates higher than Nature or Science, but I’m definitely not aware of the rejection rate of any of the journals I am an editor or referee for, and I don’t think any of those journals are doing things to my review process that would affect their rejection rate!

Re: Philosophy. I don’t think there is any one thing that people use to determine journal prestige. Anecdotally, I’ve had or overheard this conversation:

A: I just got my paper into the Fancy Pants Philosophy Biennial Review

B: Oh wow! Don’t they only accept one paper a year?

A: Yes, I’m really excited

Our point in this post is more of an intervention. Let’s not do more of this.

Could editors influence rejection rates? Of course! Not only could they ask associate editors to be more stringent (something that definitely does happen), but they could also change the review process to make it harder to publish. For example, some journals in the sciences will get three or more reviews and if anyone is negative, they reject.

Re: Other fields. It is quite clear that some other fields use rejection rates as a proxy for quality (at least in combination with other metrics). There was a recent episode where the editor of an econ journal boasted that they accepted zero papers that year. CS conferences are starting to advertise their rejection rates, and people are even putting this on their CVs. (My co-author Julian can comment more about this, I think he’s preparing a companion blog post for CS right now.)

The conversation you’ve overheard reveals that publishing in high-rejection journals gives authors prestige, but not necessarily the journal itself. If journal prestige means that people think the journal publishes good or important articles, then there does not seem to be a strong relation between the prestige of publishing in a journal and the prestige of the journal itself.

Hence, I’m not at all convinced that your results (though interesting) show that there is anything wrong with attributing prestige to authors who publish in high-rejection journals.

Journals often use their rejection rates in their communication with aspiring authors and referees.

This is somewhat beside the point, but I’m pretty skeptical that most of us form our quality judgements in the journal rankings surveys based on the quality of the output we’ve read in those journals. Instead, I strongly suspect that, for most of the journals in question, most of us are mostly just reporting the ranking we’ve internalized. In other words, it’s mostly a reflection of established convention, with occasional adjustments to reflect new developments. (Yes, that’s a lot of ‘mosts’.)

For my part, for example, I really struggle when asked to rank PhilReview, Mind, Nous, etc., because they publish almost nothing in my main research area (aesthetics). Sure, when they do publish something, it’s pretty good, but so is most of the work published in the BJA and the JAAC, or in other well-ranked generalist journals. For any given year (/decade) when PR/M/N/etc. does publish an aesthetics article, I wouldn’t say that article is the best article in aesthetics that I’ve read that year (/decade), even if it’s very good. And while I occasionally read work in those journals that pertains to other areas, I’m not at all confident that it’s head and shoulders above the other stuff I read elsewhere.

I’m much more comfortable ranking generalist journals that regularly publish work I read, both in and out of aesthetics (e.g. AJP, Synthese, PhilStudies). But then, how should I compare these familiar outlets to relatively unfamiliar outlets like PR, which I know “everyone” holds in very high esteem?

I suspect I’m not at all alone here, especially given how high the rejection rates are at journals like PR/N/M/etc., and the relatively narrow range of work they publish.

What’s the evidence that the editors at any philosophy journal aim at a high rejection rate as such, rather than aiming at a high average quality per paper published and knowing that a high rejection rate will be a side-effect of achieving that?

My impression is that the authors assume that treating rejection rate as a proxy for journal quality would incentivise editors to do that. This is so given that editors are incentivised to increase the perceived quality of their journal. Doing so increases their own reputation.

This is something that has happened to me, more than once. I’ve received R&R verdicts from reviewers, but the editors explicitly stated that they were rejecting the paper because they wanted to keep acceptance rates low.

I like the idea that when rejection rates are used as a proxy for quality, editors may be “motivated to tolerate more error in its peer review process in order to give authors a fighting chance to be accepted”. Here’s another idea about why it might be bad to use rejection rates as a proxy for quality: doing that might motivate journals to be opaque about the standards they actually apply to submissions.

Suppose that an editor is highly disinclined to publish anything with equations in it, or anything inspired by Wittgenstein, or anything meeting some other characteristic. If they advertise this fact, then that will disincentivize authors of those papers from submitting. As a result, the use of rejection rates as a proxy for quality might drive editors to *not* advertise such facts. The result would be increased opacity in standards for acceptance.

Definitely! This was something we thought about including, but decided the model was already complicated enough. 🙂

I agree, I think this is a real issue and perhaps one that is very serious for philosophy. The vagueness of the standards might actually (unintentionally perhaps) serve a purpose.

Did you consider what would happen if one is allowed to submit to more than one journal at the same time, perhaps as they do in Law Reviews? (Apologies if you covered that in the paper–I’ve only read this post).

We did not, in large part because in our model there is only one journal. (A serious idealization that we discuss.) But we do think the interesting next step would be to expand the model to include more than one journal. If we did that, then we could think about what might happen if one allowed multiple submissions. Like you, I’ve always been interested in that norm and what might change if it was abandoned.

Further to Kevin’s reply: if you tried to capture this idea within the narrow constraints of our model, you might represent it as a reduction in opportunity cost to authors. (Submitting to journal A is not foregoing the chance to publish my work quickly elsewhere.) This prima facie looks like a good thing, because it reduces the self-selection effect, which is what permits journals to invest less effort in peer review.

But I frankly don’t know if this is going to be robust once we explicitly model competition between journals.

And just on a more practical note: notice that this practice only seems to occur in a discipline where there is a large pool of cheap labour to do the reviewing: law reviews tend to be edited by students. I don’t think there is a lot of extra supply of quality reviewers in philosophy that we can tap into so readily.

Thank you both for these responses. And thank you for this important work!

It’s worth noting that, with law review submissions to US (*) law reviews, they are normally not peer reviewed (**), but rather reviewed by current law students, the review is rarely blind, and people typically send a copy of their CV. This tends to lead to “trendy” topics (i.e., ones that appeal to 2nd and 3rd year law students) getting lots of focus, and to “big names” having their papers accepted much more easily than others, and to people at “name brand” schools having an easier time getting papers accepted. There are exceptions, but these are clear and obvious trends. It’s not clear that multiple submissions would work if they were less like what’s done in law reviews and more like what’s done in peer reviewed fields. (Why would people bother to referee if the paper is also being considered at a number of other journals and the work could just be for nothing?) I think that both approaches have some virtues but it’s important to see that moving towards a multiple submission approach like US law reviews would involve some very radical changes.

(*) In some countries law reviews are more like normal peer reviewed journals. This is so in Australia, for example, where there is blind peer review and single submission, at least in most cases.

(**) Some US law reviews have put in some limited peer review, but have usually required single submission during that period, and the review is often more cursory. Some have also moved to a more blind review format, but this is pretty limited.

I really like the paper, but I’m concerned with the idealization of a single journal. If there were multiple journals, I would expect the behaviors of authors to change drastically. If the rejection rates at journals are high enough, and the number of journals is large enough, I would expect people to maximize the number of submissions to journals. If the rates are too high, people would essentially treat publication as a lottery, and the way to win a lottery is to buy a lot of tickets. Submission rates would increase.

Maybe I’m missing something here.

A bit confused about what this worry is: aren’t increased submission rates consistent with what we are seeing? It seems that (among other reasons) low acceptance rates have led people to maximize submissions and so prioritize journals with quick turnaround times. And I think we’ve already seen journals start to compete on turnaround times (by adding editors, increasing desk rejections, etc.). I know I have pretty much written off certain journals due to the average length of their review period.

This is going quite a bit beyond the model, but I’m curious what the worry is.

I guess it’s not really a worry. I’m more curious about the possible differences in predictions between single- and multiple-journal models.

I agree with you that this is a serious idealization that needs to be relaxed, something we think would be interesting future work.

I think your intuition depends on the ability of authors to submit to multiple journals at once, right? In the case where that is prohibited, the authors would still have to choose an order to submit in.

Rejection rate (or acceptance rate, which I hear mentioned much more often) is certainly an imperfect proxy for journal quality. However, for most journals, there is some number of papers p that they are able to publish per year due to space constraints. (The constraints might be set in terms of pages rather than papers, but that’s much the same.) If the journal gets s submissions, then in the long run the rejection rate is going to be (s-p)/s. In the short run it can be higher, by building up a backlog of unpublished (or published online only) papers, but that can only go on so long. Of course, you could still have a poor journal with a high rejection rate if the few papers they accept are the wrong ones or if they receive many papers that are all mediocre at best. But the only way to have a good journal with a low rejection rate would seem to be having a journal that receives few submissions relative to the number of papers you’re able to publish. This could happen with an online journal that publishes a lot or a narrow specialty journal where not many people submit.
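The long-run arithmetic in the comment above can be written out as a short sketch. The function name and the example figures are hypothetical, and the sketch assumes the journal fills its space whenever it receives enough submissions, which is the commenter's premise rather than an established fact about any journal.

```python
def long_run_rejection_rate(submissions, capacity):
    """Long-run rejection rate (s - p) / s for a journal that fills its space.

    submissions: papers received per year (s)
    capacity: papers the journal is able to publish per year (p)
    Assumption: the journal publishes min(s, p) papers each year.
    """
    if submissions <= 0:
        raise ValueError("submissions must be positive")
    published = min(submissions, capacity)
    return (submissions - published) / submissions


# Hypothetical figures: 1000 submissions per year, room for 50 papers.
print(long_run_rejection_rate(1000, 50))
# A narrow specialty journal that receives fewer papers than it has room for.
print(long_run_rejection_rate(40, 50))
```

On these assumptions the rejection rate is fixed entirely by submission volume and space, which is why a journal with few submissions relative to its capacity ends up with a low rate regardless of how good its papers are.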

Isn’t this an argument for moving to online only, where space constraints do not apply?

Sure, it could be.

Publishing online isn’t free. There is still the cost of preparing the paper for being put on a website and organizing the site in a way that makes it easy to navigate. I think all journals face space constraints of one form or another.

Space constraints apply to online-only journals as well, since there are costs associated with publishing papers.

I’m not sure I follow. If a journal cannot publish more than p papers, why does that entail that the journal will publish exactly p papers? Our model suggests that journals would opt to publish fewer than that in order to either increase the average quality of papers published or increase their rejection rate.

I suppose that I was drawing on my own experience as an editor. (I edited Utilitas for 6 years.) I had an annual page budget from CUP. When I took over, I started rejecting more papers. (The scope of the journal had broadened over the years, and I wanted to bring it back to its original mission, so I was desk rejecting papers based on their not engaging sufficiently with the utilitarian tradition when my predecessors might have considered them.) Our backlog of accepted but unpublished papers got much smaller as a result, but I always came close to my page budget. If I couldn’t do that, I planned to call the experiment a failure and broaden the scope of the journal again. Prima facie I would assume my approach was pretty typical and that at least for established print journals, publishing far less than they’re allowed on a regular basis would be very unusual. Philosophers’ Imprint famously published very little when they first started up, since they were apparently worried about establishing their credibility as a novel online journal. Are there a lot of examples like that?

FWIW, I’m not terribly surprised to hear that rejection rates are higher at philosophy journals than at journals covering other disciplines. I don’t love this fact, but it’s just what I think one would naturally expect. I mean, almost anything counts as the submission of a “philosophy paper,” doesn’t it?

At the other end of the hierarchy are those journals with abnormally low rejection rates. In many cases this is commercially motivated as a number of journals (most unlikely to be in philosophy) pursue author fees. I have stopped reviewing in these kinds of journals because the reviewing process, such as it is, is almost completely nominal and papers get through with very little amendment despite serious flaws.

This paper (that I wrote) https://arxiv.org/abs/2303.09020 has another argument along rather different lines about why using rejection rates to measure quality is not a good idea. To summarize, agents self-select as to what/where they submit depending on how likely it is to be accepted. Thus, raising the threshold for acceptance may reduce the number of acceptances at a faster or slower pace than the number of submissions.

It is well known in computer science that the acceptance rate of a venue is not indicative of its quality. Our paper provides an explanation for this.

This paper was published in Economics and Computation last year, but an expanded version is on arXiv, which is why I linked to it.

I really liked this paper, but I also haven’t ever had the background notion that’s being challenged–simply never understood why one would draw a connection between rejection rate and journal quality in the first place.

I like the train of thought in this post/paper. I wonder if it assumes that authors know the quality of the submissions they write? Is that something that can be assumed safely?