Thinking about Life with AI

“What kind of civilization is it that turns away from the challenge of dealing with more… intelligence?”

That’s Tyler Cowen (GMU), writing at Marginal Revolution. He is addressing the “radical uncertainty” we should acknowledge regarding a future in which we’ve developed artificial intelligence (AI). Even if one does not believe that large language models (LLMs) could be a form of AI (recall the possible architectural limitation noted in the paper discussed last week), it does seem that at least the AI-like is here, will only get more convincing in functionality, and will likely bring substantial changes to our lives.

Cowen’s targets are those who are making broad judgments about the goodness and badness of these technological developments. He thinks we’re living in a transformational period—he calls it “moving history”—and our predictions about it should be informed by an appropriate degree of epistemic humility. He says:

Since we are not used to living in moving history, and indeed most of us are psychologically unable to truly imagine living in moving history, all these new AI developments pose a great conundrum. We don’t know how to respond psychologically, or for that matter substantively. And just about all of the responses I am seeing I interpret as “copes,” whether from the optimists, the pessimists, or the extreme pessimists… No matter how positive or negative the overall calculus of cost and benefit, AI is very likely to overturn most of our apple carts, most of all for the so-called chattering classes.

Of course, that AI is “very likely to overturn most of our apple carts” and will ultimately be as unpredictable in its effects as the invention of fire or the printing press is itself a bold prediction. But suppose we accept it. That we can’t be certain of what might happen doesn’t render speculation random or pointless.

So let’s speculate. I’m curious what changes, if any, you think we might be in for.

And let’s talk about how to speculate. I’m curious about how to think about these changes.

We might learn something from paleo-futurology, the study of past predictions of the future. One lesson appears to be that while some technological advances may be easy to predict, social changes are less so. Futurists of the 1950s, thinking about life in the year 2000, were able to anticipate, in some form, such things as video calls, increased use of plastics, and easier-to-clean fabrics:

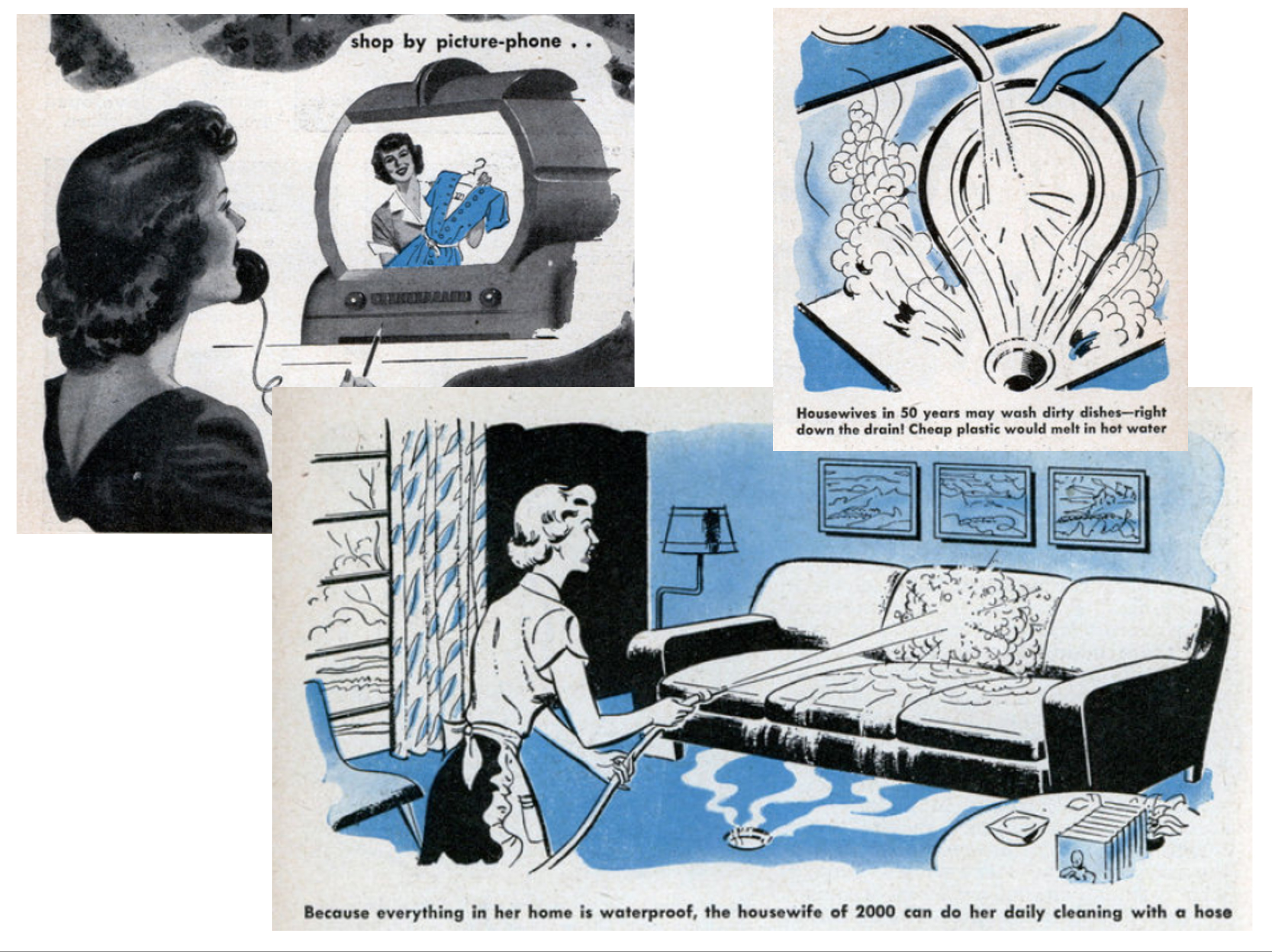

Some of the pictures that accompanied “Miracles You’ll See in the Next Fifty Years” by Waldemar Kaempffert, published in Popular Mechanics in February 1950

Yet apparently it was not as easy to predict how odd it would be to relegate the shopping and cleaning to “the housewife of 2000”.

Technological changes affect attitudes and norms that in turn affect our expectations for various aspects of our lives, and those expectations have effects on how we live, what we think, the kinds of individual and collective problems we recognize, what else we are spurred to change, and so on.

So it is complicated, and so yes, let’s be epistemically humble. But let’s let our imaginations roam a bit, too, to explore the possibilities.

“So it is complicated, and so yes, let’s be epistemically humble. But let’s let our imaginations roam a bit, too, to explore the possibilities.” How would we grade such a covering-the-whole-logical-terrain feel-good prescription in a student essay? Not well, I surmise.

Hmm, I wonder if there’s a relevant difference between an essay written by a student and, I don’t know, Justin Weinberg posting on a blog?

Perhaps I should have been clearer, or perhaps you should have been more epistemically humble in imagining what the “whole logical terrain” is.

Justin, your description of the 1950 predictions image is overly charitable.

The dishwashing one says “Housewives in 50 years may wash dirty dishes—right down the drain! Cheap plastic would melt in hot water.” I wouldn’t describe this as “able to anticipate, in some form, … increased use of plastic.”

The sofa one: “Because everything in her home is waterproof, the housewife of 2000 can do her daily cleaning with a hose.” Predicts “easier-to-clean fabrics”? Come on.

These predictions seem to me ridiculous and probably make the opposite point to the one you’re trying to make.

It might be interesting to also consider these predictions of 2000 from around 1900, which include a “whale bus” and divers riding seahorses: https://publicdomainreview.org/collection/a-19th-century-vision-of-the-year-2000.

The images and predictions at that link are fantastic (and fantastical). Thanks for sharing it.

Maybe you are right that I was overly charitable about the 1950s technology predictions. Perhaps humans are as bad at predicting the technological aspects of the future as they are the social aspects. If so, that’s more reason for epistemic humility here, which is fine by me.

Maybe sticking to the context, rather than sniping with straw men and red herrings, would be more productive.