New Site Presents Philosophy Job Placement Data

Charles Lassiter, associate professor of philosophy at Gonzaga University, has created a new site to provide job placement information about philosophy Ph.D. programs.

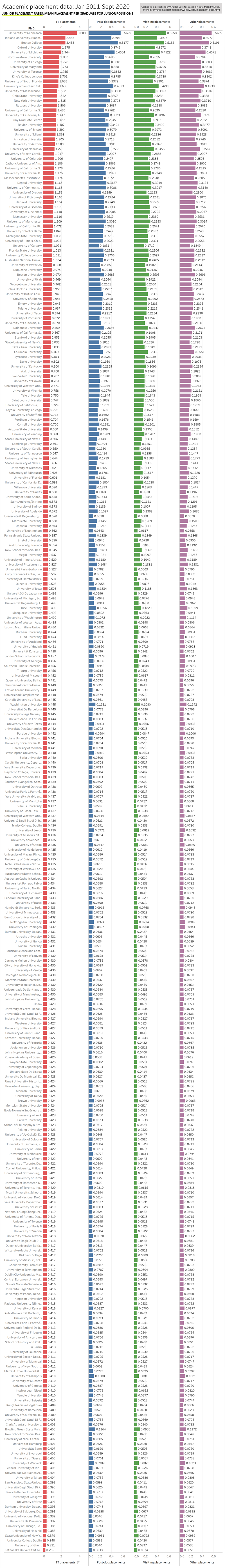

The site contains graphs and tables for departmental placements per graduate, junior placement rates, a searchable drill-down table where users can see where graduates of a program have historically placed, and more. It’s based on data from PhilJobs, downloaded information on graduation rates, and some assumptions, and it covers January 2011 through September 2020.

Professor Lassiter calls it a “first attempt at coming up with a model for placement rates in philosophy,” and notes that in some ways it is not complete (check out the “Into the Weeds” section for more details on how it was made). He aims to update it every six months, in September (for job seekers) and March (for students headed into PhD programs).

You can explore the site here. Below is just one item from it: junior placement rates by department.

Does anyone know how info about placements ends up on philpapers.org? Are the departments providing the data or is it the candidates themselves?

You can update your employment status on PhilPeople (it shows up on the “Appointments” page of PhilJobs), which may be one source of data for this project.

Hi Harald,

SEC has it right. For this project, the info comes from (i) people posting their placements on PhilJobs and (ii) from profiles on PhilPeople.

I don’t understand, how can the number be larger than 1?

Maybe that indicates a single graduate accepts more than one job during the data period?

Hi grad student and Also confused,

Thanks for the question. The data are averaged over the last 9 years, during which time someone might have more than one position. If one person has an adjunct position, 2 post-docs, a visiting position, and then a TT position, that’s five jobs for one person. For privacy reasons, there isn’t an identifier attached to any of the postings, so it’s not possible to identify that it’s one person with five jobs. If that happens for multiple people in a department, then the ratio goes over 1.

Mea culpa: I just reviewed the code and saw that there is an appointee id in the initial data set. When prepping the data, I must have removed it to simplify the analysis. In the next update, I’ll control for multiple appointments.
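To make the arithmetic concrete, here is a minimal Python sketch, assuming each posting row carries an appointee id as described above. It compares "postings per graduate" (which can exceed 1) with "unique appointees per graduate" (which cannot). The field names, the program name, and the counts are all hypothetical illustrations, not the site's actual code or data.

```python
from collections import defaultdict

# Hypothetical records: one row per appointment posting. Field names
# ("appointee_id", "program", "kind") are illustrative assumptions.
appointments = [
    {"appointee_id": 1, "program": "Program A", "kind": "adjunct"},
    {"appointee_id": 1, "program": "Program A", "kind": "postdoc"},
    {"appointee_id": 1, "program": "Program A", "kind": "postdoc"},
    {"appointee_id": 1, "program": "Program A", "kind": "visiting"},
    {"appointee_id": 1, "program": "Program A", "kind": "tenure-track"},
    {"appointee_id": 2, "program": "Program A", "kind": "tenure-track"},
]
# Hypothetical number of graduates per program over the same period.
graduates = {"Program A": 4}


def placement_ratios(rows, grads):
    """Return (postings per graduate, unique appointees per graduate) per program."""
    postings = defaultdict(int)  # every posting counts, even repeats by one person
    people = defaultdict(set)    # each appointee id counts once per program
    for row in rows:
        postings[row["program"]] += 1
        people[row["program"]].add(row["appointee_id"])
    return {
        prog: (postings[prog] / n, len(people[prog]) / n)
        for prog, n in grads.items()
    }


print(placement_ratios(appointments, graduates))
```

Here one person's five appointments plus a second person's single appointment give 6 postings for 4 graduates (a ratio of 1.5), while counting distinct appointees gives 2 of 4 (0.5).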

That makes sense, thank you!

On another note, I think a warning about postdocs in Europe would be appropriate. Those numbers appear very, very low for some of the universities. On the other hand, TT job rates appear way too high, given that German academics typically go for multi-year postdocs and a habilitation before being eligible for TT jobs.

While I appreciate the work that has been put in here, the data needs to be cleaned in order to provide a more accurate picture. Just as one example, there are multiple separate entries for Helsinki and Western (Canada).

Hi Alex,

I see two rows for Helsinki and one for Western in the summary visualizations. There are two for Helsinki because one is “University of Helsinki” and the other is “U of H, Dept of philosophy and religious studies.” In general, if additional descriptors were attached to a university’s name, I took that as an indicator that the department was distinct from the philosophy department. For example, folks in the dataset distinguished between getting a PhD at University of California, Irvine and at UC, Irvine, Logic and Philosophy of Science.

Hi again Charles,

Thanks for your reply. For Western, I see:

Western University

University of Western Ontario, Department of Philosophy and Rotman Institute

University of Western Ontario

Unless there is something I don’t know about the data, Western University is just a different way of referring to the same institution in London, Ontario. The recent rebranding has caused some confusion, but it is the same school.

Something similar happens with UofT. The University of Toronto offers specialization programs that, I take it, are leading to graduates listing their interdisciplinary associations on PhilPeople. The UofT IHPST PhD is a standalone, but the entry for Department of Philosophy & Department of Jewish Studies is more than likely a Philosophy PhD with a Collaborative specialization in Jewish studies. This is different, for example, from the Pitt Philosophy/HPS distinction, which is akin to the Toronto Philosophy/IHPST distinction.

I would guess a similar problem is at work in the following:

University of Missouri/University of Missouri, Columbia

University of Michigan/University of Michigan, Society of Fellows (a postdoc from what I understand)

University of Manchester/University of Manchester, MANCEPT

University of Helsinki/University of Helsinki, Department of History, Philosophy, and Art Studies (either could be distinguished from the Helsinki Practical Philosophy program if memory serves)

University of California, Berkley/University of California, Berkeley

unam (which is National Autonomous University of Mexico (UNAM))

The JHU entries, etc.

You see my point, I hope.

Yep. I thought I caught all the errors, but clearly I missed some. Thanks for pointing them out. They’ll be fixed the next go-round.

This was bugging me so I rechecked the cleaned up data:

1. U of Missouri appeared as “U of Missouri”, “U of Missouri, Columbia” and “U of Missouri, St Louis”. St. Louis has an MA in philosophy, with which someone can get an adjunct gig. So “U of Missouri” could have been either Columbia or St Louis. In an effort to let the data speak for themselves, I left them as they were.

2. I didn’t realize “Society of Fellows” was just a fellowship program. Thanks.

3. U of Manchester, MANCEPT is a political theory org. I (perhaps mistakenly) thought that they were separate from philosophy.

4. Berkley/Berkeley: that’s my mistake. Thanks for catching it.

5. There was only one entry for UNAM, so I didn’t think there was any harm in leaving the abbreviation. But I’m happy to fix it.

6. I initially thought the various specifications of JHU indicated different programs from which to get a PhD at JHU. (My inner Gricean argued, “Why include the detail if it’s not conversationally appropriate?”) I think in the next update, I’ll cut the program specifications from JHU.

In general, my guiding principle was to play the data as they lie rather than tweak them. We’re only dealing with a few MB of data as opposed to GB or TB, so I didn’t see any pressing reason to streamline the names. I’ll have to give some consideration in the future to revising that principle. Thanks again for the feedback.
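One lightweight way to act on the merges agreed in this thread, while still leaving genuinely ambiguous names alone, is an explicit alias table. The Python sketch below is a hypothetical illustration, not how the site's next update is actually implemented: `ALIASES` and `canonical` are made-up names, and the table lists only merges discussed above. Names not in the table, such as the ambiguous “University of Missouri,” pass through unchanged.

```python
from collections import Counter

# Hypothetical alias table: raw institution string -> canonical name.
# Only merges discussed in this thread are listed; everything else is
# passed through untouched, in keeping with leaving the data as they lie.
ALIASES = {
    "Western University": "University of Western Ontario",
    "University of California, Berkley": "University of California, Berkeley",
    "unam": "National Autonomous University of Mexico (UNAM)",
}


def canonical(name: str) -> str:
    """Map a raw institution name to its canonical form, if one is known."""
    cleaned = " ".join(name.split())  # collapse stray whitespace
    return ALIASES.get(cleaned, cleaned)


raw = [
    "Western University",
    "University of Western Ontario",
    "University of California, Berkley",
    "University of California, Berkeley",
    "University of Missouri",            # ambiguous: deliberately not merged
    "University of Missouri, Columbia",
]
counts = Counter(canonical(n) for n in raw)
print(counts)
```

Keeping the merges in an explicit table makes each one a deliberate, reviewable decision, so a correction from a reader becomes a one-line change rather than a silent tweak to the raw data.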

While this is a great service to the community, I worry about the distortions that arise from the comprehensive claim (“placement data in philosophy” without any specification). The data include many European universities. While it has become more common to post European jobs on PhilJobs, this varies a lot from country to country, especially at the postdoc level. Note also that not all European job seekers post their appointments on PhilJobs. Take my own case: I graduated from a European university that shows up in the data. I am now TT at an American university, but before that I held 3 postdoc positions in Europe. I did not post any of those postdoc appointments on PhilJobs. I also wonder whether Professor Lassiter has reached out to the professional organizations in European countries to gather information, to ask where jobs and appointments are announced there, etc. I see a real problem here: the PhilJobs data include European data, but only a self-selected set. How can this be representative? You have a more comprehensive set of American data combined with a fragmentary set of European data. I thus have my doubts about how meaningful the data are. But maybe Professor Lassiter has considered these issues…

Dear EuropeaninAmerica,

Thank you for your question and concern. In the future, I can add a note that the data are taken from PhilJobs and, for better or worse, the visualizations and analyses reflect any biases in patterns of posting.

For whatever it’s worth, for the sake of accuracy I considered excluding non-English-speaking programs from the analysis for the reason you mention: namely, it seems to be much more common in English-speaking countries to post to PhilJobs. But the short-term gain of a more accurate picture of a smaller number of programs seems less important than the long-term gain of a more inclusive analysis. If programs outside America, Canada, Australia, NZ, and the UK are left out at the start, I worry that it would make their future inclusion harder. (Why? The project depends on individuals logging their info. If folks don’t see themselves or their programs represented at the start, buy-in later down the line would be more challenging.) I decided to be as inclusive as possible with the data in the hope that it would encourage folks from non-English-speaking countries to log their placements, which can give us a better view of what’s going on in academic hiring in philosophy.

I haven’t reached out to any programs for their data; everything here is grabbed from PhilJobs. Trying to avoid letting the perfect be the enemy of the good, I made the analyses available hoping it might encourage individuals and perhaps departments to make the relevant information available, which would be better for everyone.

I don’t understand–hasn’t Carolyn Dicey Jennings/the APA already done a much more systematic version of this (which seems to involve contacting every known individual to graduate from a program, etc.)? (I think even her data is problematic and incomplete, but… it seems a lot less problematic and incomplete than this data. If the goal is just visualization, then why wasn’t her data used?)

Hi somebody,

CDJ has done outstanding work in gathering and organizing data about PhD programs with the APDA. Where that project and mine differ is that the APDA depends on survey responses where mine uses publicly available, user-contributed information. (NB that I tried to find the method details on the APDA website but couldn’t find them. So I’m confident but not certain that the APDA relies on responses to survey invitations.) There are advantages and liabilities to both approaches, but both suffer from worries about completeness.

Not to belabor the point, but I don’t think it’s right that APDA *only* relies on survey responses. Indeed, from what the website says they rely on, it seems they include public info, info from placement directors, etc. I think we have pretty strong reasons to think that, if that’s right, it’s better than the data on PhilJobs (since presumably she/they used that as one of the sources of info they were triangulating); but maybe she’ll chime in on this thread about this (I seem to remember it being discussed in earlier posts on DN, but I don’t have time to look those up right now). Here’s the info from the website about what they rely on:

program representatives, such as department chairs and placement officers, who have been provided a program-specific dashboard on which to correct and update entries for graduates of their program; individual graduates, who are provided their own dashboard through their email address, with personal and survey information that is not available to program representatives; and public websites, such as program placement pages and graduate websites.

Yes, APDA relies on more than survey responses, as it says on the About page at placementdata.com. Yes, our data set is much better than what is on PhilJobs; so much so that we use PhilJobs as a source only as a last resort (because it has introduced so many errors to our data set in the past). We go through the APDA dataset by hand every year or two to correct it using multiple sources: the individuals themselves, placement directors, ProQuest, library dissertation listings, LinkedIn, etc. There are many types and sources of error in this sort of data that can only be corrected through oversight. It is a lot of work, and I think it makes sense to ask how important it is to our profession to have an error-free data set. That is, how much error can we withstand, and how many resources are we willing to put into removing error? I know that we like to find problems; we critique, that’s what philosophers do. But this sort of project requires lots of practical action, and I don’t see as much of that in our community. Charles Lassiter seems to be offering something helpful here by having a nice visual presentation and an offer to update every 6 months. The data might have errors, but his model seems sustainable. I am hoping to find a way to make the high level of accuracy that APDA aspires to sustainable as well, but the kind of work we are talking about costs thousands of dollars every year (not including a large chunk of my own research and service time), and it isn’t clear yet that this is the best model for a discipline of our size with all of the needs that we have. I think it is helpful for others to be actively thinking about ways of doing this type of work that are useful and sustainable as well as accurate. While I am disappointed that Charles doesn’t seem fully aware of what our project already offers, I suspect that is because we at APDA haven’t done as well as we could at communicating with the profession about what we have done.
If people have advice on this, let me know!

Hi Carolyn,

Thank you for your response. A few thoughts:

1. I’ve been to placementdata.com several times from several IP addresses and couldn’t connect to the data or to the visualization tools. I figured that the project had closed out, but I was wrong and I’m sorry for this oversight!

2. Also, I couldn’t find anything on methodology on that same site or in the most recent report. Maybe I wasn’t googling the right phrases to find the most recently updated page? Again, this may have been an oversight on my part.

3. You ask about “what makes for the best program” in philosophy. I think my research question may be a bit narrower than the APDA’s since I’m interested only in placements.

4. The speed vs. accuracy tradeoff is an interesting point. My model can be updated every 6 months (or 3, or whatever the shortest interval is that I can practically manage) because producing it is relatively cheap: it’s just me and my time, and I’m using open-source software to do my analyses. But on speed vs. accuracy, I think there are three open questions in this case: (1) whether speed is reasonably traded for accuracy in this instance, (2) how much more accurate your model is, and (3) whether accuracy *is* being traded for speed. Intuitively, I think the answer to (3) is yes: your model is more accurate since you’re drawing from multiple data sources. But again, it’s not clear just how much more accurate it is, at least from the analyses I’m running. I’m sympathetic to the worry about which kind of model is best. I think we have the same aim: providing early-career and aspiring philosophers with the data they need to make the best choices for themselves.

6. I’m not sure how to make your findings more visible. I’m trying to do the same and would love to support promoting the APDA’s data in any way I can. 🙂

1. Sorry to hear that. I am not sure what has gone wrong there but I will look into it. The website works for me. Sometimes one tab fails to load, but if I refresh it works. I thought that was just me but maybe it has a coding error.

2. The About page has basic information about this, as the commenter above and I noted. The “best program” post also has details about this. Here are selections:

“Entries on our website come from: program representatives, such as department chairs and placement officers, who have been provided a program-specific dashboard on which to correct and update entries for graduates of their program; individual graduates, who are provided their own dashboard through their email address, with personal and survey information that is not available to program representatives; and public websites, such as program placement pages and graduate websites. If you are a philosophy PhD student or graduate and would like access to your individual dashboard, but you have not yet received a link to your dashboard, please send an email to [email protected].”

3. I guess APDA has several aims now, including the one you mention, but it started out as just about placement information and that is still its main focus.

4. I know it isn’t obvious how different the accuracy is, and I don’t have a numerical way of expressing this; I can just say that the PhilJobs data has lots of problems, and lots of types of problems. Our data is nearly complete and very accurate for all graduates of the years 2012-2018. From working on the project for many years, I can say there is definitely a trade-off in accuracy when you do things quickly or easily. You can see this to some extent in the reports we have written, because we talk about all of the things we have had to do to fix problems in the data. I could describe this in more detail, but it would take a lot of time and I am not sure it is the most helpful thing for this thread.

6. Thanks, Charles.

The University of Minnesota! Go Gophers.

Probably a double count in the list: University of Leuven and Katholieke Universiteit Leuven.

I’m pretty certain there is no such thing as University of Leuven, but that name is sometimes used to mean Katholieke Universiteit Leuven.

(the list does contain a separate entry for Université Catholique de Louvain though, which is correct)

Keep up the good work.