Dunning-Kruger Discussion

A post by Blair Fix at Economics from the Top Down, which I put in the Heap of Links last week, asks whether the Dunning-Kruger effect (the inverse relationship between one’s skills in a particular domain and one’s tendency to overestimate them) is a mere statistical artifact. It generated some discussion and prompted an email from a philosopher with a possibly helpful reference.

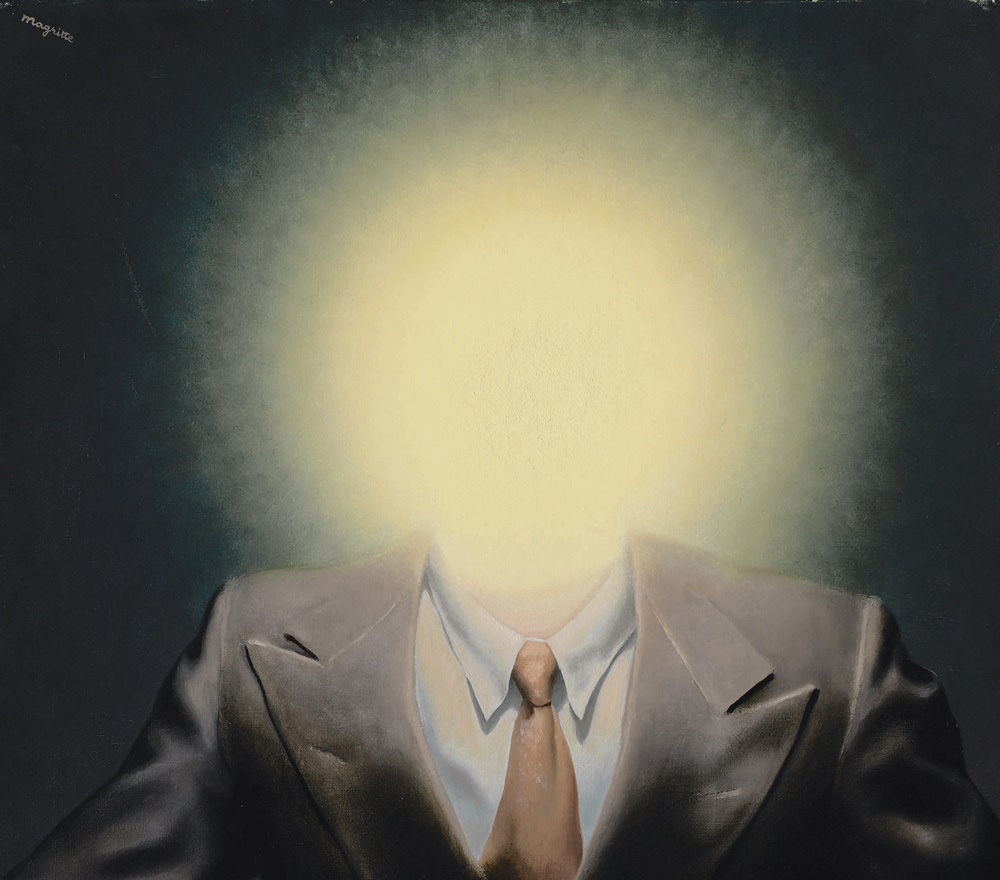

[Rene Magritte, Le Principe du Plaisir (detail)]

The claims made by Blair Fix in “The Dunning-Kruger Effect is Autocorrelation” are both vastly overstated and ones to which David Dunning has responded. Notably, [Joachim Krueger and Ross Mueller]* raised the original statistical complaint twenty years ago (2002, Journal of Personality and Social Psychology), but Kruger refutes that complaint in the immediately following article in the very same issue of the journal (with Dunning, 2002). The responses to the complaint seem compelling and there’s lots of further research which suggests the effect is fairly robust.

Here’s a brief excerpt from the Dunning response:

Often, scholars cite statistical artefacts to argue that the Dunning-Kruger effect is not real. But they fail to notice that the pattern of self-misjudgements remains regardless of what may be producing it. Thus, the effect is still real; the quarrel is merely over what produces it. Are self-misjudgements due to psychological circumstances (such as metacognitive deficits among the unknowledgeable) or are they due to statistical principles, revealing self-judgement to be a noisy, unreliable, and messy business?

The piece by Dunning is here.

Further discussion welcome.

* Correction: As Devin Curry pointed out in a comment, and as Amy Seymour acknowledged, this piece should have been attributed to Joachim Krueger and Ross Mueller, not Justin Kruger.

While it’s fair to say the jury’s out on Dunning-Kruger, no one is convinced by Dunning’s responses. Rather, it’s been rehabilitated to some degree by this paper.

https://www.nature.com/articles/s41562-021-01057-0

I don’t think philosophers should rely on it, given the current state of play.

Justin Kruger didn’t raise the statistical criticism himself–the piece Seymour cites is by Joachim Krueger and Mueller.

On the substance: I think there’s plenty of clear evidence of Dunning-Krugerish effects–while self-misjudgment abounds, there is a negative correlation between expertise and self-misjudgment–but Neil Levy may be right that we should suspend judgment about the Dunning-Kruger effect narrowly construed (i.e. that a lack of expertise is systematically correlated with overconfidence in particular, as opposed to misjudgment in general).

Goodness gracious; thanks for the correction, Devin! While I did some digging, it’s clear that I should have done far more in order to prevent things like conflating J. Kruger vs. J. Krueger! Perhaps the jury is out regarding what effect best explains that.

It would be interesting to hear why, according to Neil, “no one” is convinced by Dunning’s responses. I found something in the neighborhood plausible, at least. I’m often psychologist-adjacent, and the whispers I hear don’t seem to be ones of disdain given the other work Dunning has cited. (Though, of course, one is always limited in terms of their interlocutors!) The Edwards et al. 2003 paper Dunning cites toward the end of his response seemed particularly striking regarding the narrow construal.

Cheers, Amy. Without digging into the details, I can think of several possible explanations of the Edwards data. (Maybe there’s just a strong pull to expect a B-range grade, due to experience in the U.S. education system.) But I agree that Dunning’s explanation shouldn’t be ruled out.

The Dunning-Krugerish data you are talking about here doesn’t quite show a negative correlation between misjudgement of one’s ability and expert ability, right? The worst performers misjudge the most; misjudgement then steadily declines until an above-median performance and strictly increases again as you tend towards expert performance.

The general characteristics of this graph even hold for some relationships between having some property and misperception of having that property and can be explained by the same statistical artefacts and general cognitive biases. (Below is a graph from a political science paper with this form.) So I’ve seen psychologists like Yarkoni state the conclusion of the debunking as no stronger than that our default should be that it is explained by regression to the mean and the better than average effect. But the conclusion can get much stronger than that on blogs and social media.

Yeah, I think there’s a negative correlation overall, but whether it’s a strictly linear relationship seems to depend on the domain.

I realized after posting that I probably just misread the comment I was replying to. I thought your comment about misjudgements referred to what would be the absolute values of the y-values in the graph I posted. But I now understand you to have meant to include a sign for whether one overestimated or underestimated. If that’s the case, I was just talking past you 😬

Sorry!

There are two distinct issues that get run together in these convos. What does it mean for the Dunning-Kruger effect to be real?

1) Roughly: something close to the figures produced in the original paper represents the correlations between ability and perceived ability in a variety of domains. (Maybe the data doesn’t reflect something real in a wider population.)

2) Dunning-Kruger’s original explanation for the effect, the one Dunning has spent a lot of the time since then trying to provide evidence for, is right.

My understanding is psychologists agree that the effect is real in the sense of (1); it’s been replicated a ton.

What people mean when they say the effect isn’t real is that (2) is wrong. So the quote in this post from Dunning doesn’t really address the claim that the effect isn’t real in this sense but concedes it.

Here is a quote from the original article stating the explanation:

“…people who lack the knowledge or wisdom to perform well are often unaware of this fact. We attribute this lack of awareness to a deficit in metacognitive skill. That is, the same incompetence that leads them to make wrong choices also deprives them of the savvy necessary to recognize competence, be it their own or anyone else’s.”

The claim that it’s not real then involves denying this kind of explanation, often by explaining the data as resulting from statistical artifacts and general cognitive biases.

Now I am not saying it is or isn’t real in this sense since I haven’t seen whether the paper Levy posted rehabilitates the kind of explanation Kruger and Dunning were going for. And the explanation may also vary by task.

If the D-K effect isn’t real then a non-expert in D-K effects who isn’t aware of this would greatly overestimate their ability to spot the D-K effect (which should be 0), thus exhibiting D-K.

Actually, Dunning said something similar in one of his replies to his critics:

“But, perhaps unknown to our critics, these responses to our work have also furnished us moments of delicious irony, in that each critique makes the basic claim that our account of the data displays an incompetence that we somehow were ignorant of. Thus, at the very least, we can take the presence of so many critiques to be prima facie evidence for both the phenomenon and theoretical account we made of it, whoever turns out to be right.” (Dunning, 2011, 265)

Whether or not this was tongue in cheek, there is an interesting paradox that is generated when research on cognitive bias is thought to be subject to the very bias that it purports to discover. Joshua Mugg and I wrote an article about this recently:

Mugg, J., Khalidi, M.A. Self-reflexive cognitive bias. Euro Jnl Phil Sci 11, 88 (2021). https://doi.org/10.1007/s13194-021-00404-2

And a blogpost:

https://iai.tv/articles/the-bias-paradox-auid-2086

3. And if I understand it right, the statistical effect is that there is a negative correlation between (estimate of skills – actual skills) and (actual skills). But even in its simple form, the D-K effect shows something stronger: that there is this negative correlation and that people usually overestimate their skills. The latter part is independent from the correlation – one could move down the y-line in the graph on Fix’s blog and obtain the same correlation, but now with people increasingly underestimating their own skills the more their actual skills improve, contrary to (my understanding of the) D-K effect.
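The point about moving down the y-axis can be checked with a small sketch. Everything here is made up for illustration: the self-estimates are hypothetical (a weak dependence on actual skill plus noise), and `summarize` and `pearson` are helper names introduced for this example, not anything from Fix's post or the original study. The sketch shifts every self-estimate up or down by a constant and compares the correlation between (estimate - actual) and actual with the average over- or underestimation.

```python
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


rng = random.Random(0)
actual = [rng.uniform(0, 100) for _ in range(5000)]
# Assumption: self-estimates only weakly track actual skill, plus noise.
estimate = [0.3 * a + 35 + rng.gauss(0, 15) for a in actual]


def summarize(shift):
    """Return (correlation of error with skill, mean error) after a shift."""
    error = [e + shift - a for e, a in zip(estimate, actual)]
    return pearson(error, actual), sum(error) / len(error)


r_high, mean_high = summarize(+20)  # everyone rates themselves 20 points higher
r_low, mean_low = summarize(-20)    # everyone rates themselves 20 points lower

print(f"shift +20: r = {r_high:.3f}, mean error = {mean_high:+.1f}")
print(f"shift -20: r = {r_low:.3f}, mean error = {mean_low:+.1f}")
```

The correlation is the same in both cases because adding a constant does not change a Pearson correlation, while the sign of the average error flips. That is the sense in which general overestimation is a separate claim from the negative correlation.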

Thanks to Justin Weinberg for this update, and to the many commenters for an interesting discussion.

I’m surprised no one is mentioning what (for me) is the strongest claim contra the D-K effect in Blair Fix’s article: that if you take 2 sets of completely random data and submit them to the same kind of analysis done by D-K in their original article, it would also show the D-K effect.

This, in my opinion, is what would make the original D-K claim really untenable.
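A minimal sketch of that random-data argument, for readers who want to try it. It is not Fix's code: the uniform 0-100 scores, the sample size, and the variable names are all assumptions made for illustration. Two independent random lists stand in for "actual" and "perceived" percentile scores, people are grouped into quartiles by actual score, and the average gap between perceived and actual is reported per quartile.

```python
import random

rng = random.Random(42)
n = 10_000

# Assumption: both scores are pure noise, drawn independently on 0-100.
actual = [rng.uniform(0, 100) for _ in range(n)]
perceived = [rng.uniform(0, 100) for _ in range(n)]

# Sort people by actual score and split them into four equal groups.
pairs = sorted(zip(actual, perceived))
size = n // 4

gaps = []  # mean(perceived) - mean(actual) for each quartile, lowest first
for q in range(4):
    chunk = pairs[q * size:(q + 1) * size]
    mean_actual = sum(a for a, _ in chunk) / size
    mean_perceived = sum(p for _, p in chunk) / size
    gaps.append(mean_perceived - mean_actual)
    print(f"Q{q + 1}: actual {mean_actual:5.1f}, "
          f"perceived {mean_perceived:5.1f}, gap {gaps[-1]:+6.1f}")
```

Because the perceived scores are unrelated to the actual ones, each quartile's average perceived score sits near the overall mean, so the bottom quartile appears to overestimate and the top quartile appears to underestimate by about the same amount. Note that the pattern this produces is symmetric.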

Thanks for the interesting initial convo. Justin can cut me off anytime but fwiw here’s why I don’t find that argument compelling (am open to correction):

Strictly speaking, it doesn’t replicate DKish data, which show a characteristic asymmetry between the magnitude of the overestimation in the lower quartile and that of the underestimation in the upper quartile. To get this you need to shift where the perceived ability line intersects the actual ability line to the right and this is why Mueller and Krueger “introduce” the general better than average effect. (I use quotes there bc they are working with observed data and so actually just find the better than average effect and regression to the mean, an imperfect correlation bw two variables, in their data.)
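To make the asymmetry point concrete, here is a rough sketch under made-up assumptions: perceived ability is simulated as a weak linear function of actual ability (an imperfect correlation, hence regression to the mean) centered on 65 rather than 50 (a stand-in for a better than average effect). The slope 0.3, the center 65, and the noise level are hypothetical numbers chosen for illustration and are not taken from Krueger and Mueller.

```python
import random

rng = random.Random(7)
n = 10_000

actual = [rng.uniform(0, 100) for _ in range(n)]
# Assumptions: weak tracking of actual skill (slope 0.3), noise, and an
# average self-rating of 65 instead of 50 (better than average effect).
perceived = [65 + 0.3 * (a - 50) + rng.gauss(0, 15) for a in actual]

# Least-squares line of perceived on actual, and where it crosses y = x.
mean_a = sum(actual) / n
mean_p = sum(perceived) / n
slope = (sum((a - mean_a) * (p - mean_p) for a, p in zip(actual, perceived))
         / sum((a - mean_a) ** 2 for a in actual))
intercept = mean_p - slope * mean_a
crossover = intercept / (1 - slope)

# Average (perceived - actual) in the bottom and top quartiles.
pairs = sorted(zip(actual, perceived))
size = n // 4
bottom_gap = sum(p - a for a, p in pairs[:size]) / size
top_gap = sum(p - a for a, p in pairs[-size:]) / size

print(f"perceived line crosses actual line at about {crossover:.1f}")
print(f"bottom quartile gap: {bottom_gap:+.1f}")
print(f"top quartile gap:    {top_gap:+.1f}")
```

With these numbers the perceived line crosses the actual line well above 50 (at 50/0.7, roughly 71, by construction), so the bottom quartile's overestimation is much larger than the top quartile's underestimation. Whether that combination is the right explanation of real data is exactly what the mediation analysis discussed here is meant to test; the sketch only shows that the shape can be produced this way.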

But to attempt to debunk DK, they don’t simply visually inspect the similar shape of their graph to the DK graph. That would be premature. If I understand correctly, they try to show that they cannot reject the null that their data is produced by regression to the mean and the better than average effect by looking at metacognitive mediators.

I discuss this paper not because I’m suggesting it’s the be all and end all on the topic, but because it’s very clear on how a debunking would go. And in the paper they seem to suggest that social cognition may indeed play a role in explaining the exact pattern of data produced, which is discussed by Dunning in the post linked above.

So it seems to me that these recent viral posts on debunking DK conclude far too much from the simulation arguments from people like Nuhfer.

I’d also add that you have to decrease the variance in error as you move along the x-axis, which is what I now think Professor Curry was talking about rather than the binned errors in performance quartiles (sorry again!)

That seems like it probably has a non-statistical explanation? And IIRC, MK also get that with the combination of what people have been calling a statistical effect (regression to the mean) and a psychological one (better than average)

But this is just my layman’s understanding of the basic picture from puzzling through it myself and there seem to be lots of wrinkles…

Yes. (And no apology necessary!)