New Site for Philosophy Journal Survey Project

The Journal Survey Project of the American Philosophical Association (APA) (previously) has been moved.

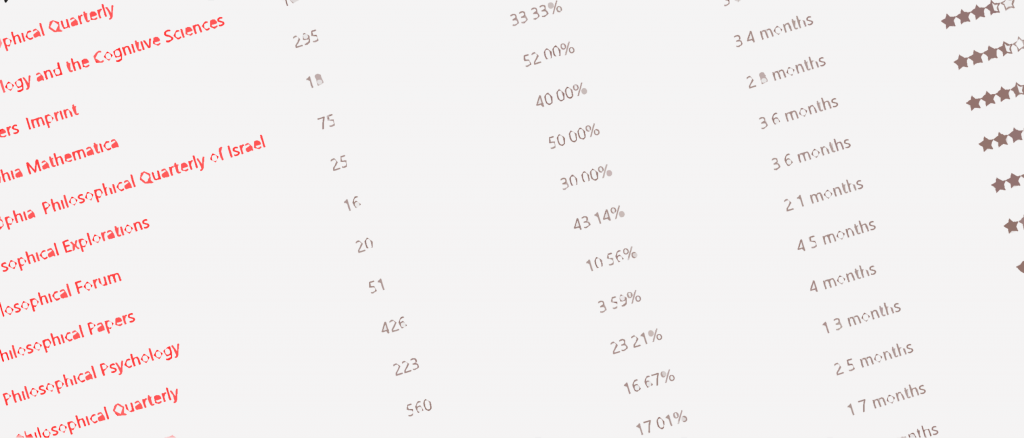

The Journal Survey Project collects data from authors about various philosophy journals’ acceptance rates, response times, and comment quality, and makes it available in spreadsheet format. It was originally developed by Andrew Cullison (DePauw) and then taken over by the APA. The APA’s previous iteration of the site had ceased accepting new data owing to its limited data allotment. The new site is here.

According to a post at the Blog of the APA, the new site was developed by the Center for Digital Philosophy at Western University, and put together by David Bourget and Steve Pearce.

I contributed faithfully to this project for years. I stopped submitting to it a year or so ago because my submissions never showed up. Even after the backlog was cleared, they never showed (I know because they were for journals–including the JAPA–which just didn’t show up in the survey at all).

As a result, I’m not especially inclined to keep contributing. I found it to be a useful project when it worked, but I need to see that it’s working before I’ll continue to spend time on it.

Seconded. Several entries I’ve made in recent years are not showing up on the current survey. This means potentially large amounts of valuable data have been lost, and future submissions will be mixed with old data without accounting for a gap of we don’t know how many years. Other than that, it’s a great project and an incredibly helpful resource. You’ve just gotta hope that the APA will be more responsive this time.

I found a gap of about 1.5 years, from mid-2016 to about the end of 2017, when I played with this data back in the Spring:

I tried contacting folks at the APA to find out the cause, but nobody seemed to know.

It’s a shame the data is lost. But I don’t see mixing as a big problem; you can always filter out older data.

FWIW, when I emailed them, they said they’d run out of hosting space, or something similar. When it was first announced that the issue was resolved several months ago (if memory serves), I resubmitted some of my reports, including one for JAPA. There’s still no JAPA entry.

This isn’t meant to defend my current non-participation. It’s just to explain why I’m dispirited. Perhaps I’ll try again on a day when I wake up less grumpy!

Some funny looking results there. If J.Phil really accepts 1 in 4 submissions I would be… surprised.

That one’s easy to explain. There are three kinds of submissions: accepted, rejected, and under various stages of review. Only people whose submissions have been accepted or rejected report to the survey. So, really, what the data reports is the ratio of accepted to rejected articles, not the ratio of accepted to submitted articles. JPhil has a 1/4 acceptance rate because most of their submissions are in the third category, not the first two. For example, I believe they’re currently experiencing delays from an unanticipated backlog of submissions from the late seventies.
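The arithmetic behind that point can be sketched in a few lines of Python. The counts below are purely hypothetical and are not taken from the survey or from any journal; they only illustrate how leaving out submissions still under review changes the denominator.

```python
def reported_acceptance_rate(accepted, rejected):
    """Rate over decided submissions only (what survey respondents can report)."""
    return accepted / (accepted + rejected)


def rate_over_all_submissions(accepted, rejected, pending):
    """Rate over every submission, counting those still under review."""
    return accepted / (accepted + rejected + pending)


# Hypothetical counts, chosen only to make the arithmetic easy to follow.
accepted, rejected, pending = 10, 30, 60

reported = reported_acceptance_rate(accepted, rejected)
overall = rate_over_all_submissions(accepted, rejected, pending)

print(f"accepted / decided:   {reported:.0%}")
print(f"accepted / submitted: {overall:.0%}")
```

With these made-up counts the first figure is 1 in 4 and the second is 1 in 10. The second figure is only a floor, since some pending submissions may eventually be accepted.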

Almost all the acceptance rates seem unusually high. To have self-selected reporting is problematic. And as Shane mentions, a number of the results seem highly dubious. As the project stands it doesn’t look particularly helpful. Indeed, it might be spreading more falsehoods than anything else.

What I think might be most useful from the surveys is knowing the average turnaround time of a journal. Or, is there reason to assume that people with non-average turnaround times will report more often?

In any case, that’s the only thing I’ve ever used the service for. (Now I know never to submit to Metaphilosophy – assuming that the reports are somewhat representative.)

This is probably a naive question, but why doesn’t the APA simply ask the journals on the list to send the relevant data? Some journals publish this information once a year in one of their issues, and I imagine that even journals that don’t do that keep a record of submission numbers, acceptance rates, etc. Wouldn’t it be much easier to ask every journal to send this information once a year, instead of asking authors to complete a survey?

I second M’s question. One thing I really hate doing (and yet which our corporate universities require) is quantifying my research productivity. This means that every year I have to reach out to the journal or journals that I’ve published in that year and ask them to kindly provide me with acceptance rates, impact scores, citation rates, and so on.

Many journals will give you impact scores on their websites but almost no journals provide acceptance rates publicly. I wonder if any editors who read this blog would be willing to (even if only anonymously) explain why that is. Worse still is when my e-mail requests for this information go unanswered! That data is important to us (not intrinsically but because universities have *made* it such) and it would be extremely useful to have.

If the APA could leverage some of its weight to get some sort of objective apples-to-apples data on even the 50 most popular journals, it would do the discipline an enormous service.

ETHICS does publish acceptance rate, time to verdict, and some other information in the annual Editorial (in the October issue).

I agree that all journals should do so. It is not trivial, and not many journals are staffed as well as ETHICS is; but journals that have the information should publish it.

Yes, Ethics is one of the journals I had in mind. I agree it would be a good idea for other journals to do the same (and I hope that they will). But there is still the question of how the APA can best use its energies to make this information available. The existing survey seems unreliable for some of the reasons mentioned above. Getting in touch with the journals that do have the relevant data and making the data available online would be a much more efficient solution, and a rather straightforward one. I’d be curious to know if there’s a reason not to go down this road.

Philosophical Review publishes all this data, as well as some of the data the Survey Project aims to collect, in something like real time.

https://philosophicalreview.org/statistics

Journals that are run well give (a rough) acceptance rate in the rejection emails. Here are two I have received in the past year or two:

Analysis: “The journal’s acceptance rate is lower than 8%.”

Bioethics: “At the present time only the top 15% of papers received are accepted for publication…”

I think these emails are much more useful than rejection letters I have received from some (double- but not triple-blind) top-20 general philosophy journals, such as this one: “The decision [desk-rejection] should not be taken as a reflection of the quality of your paper.” I wonder on what grounds these journals accept or reject papers, if not the quality of the manuscripts…

Some journals give the info on their website, such as Journal of Medical Ethics (Acceptance rate 30% in 2017, Instant rejection rate 53% in 2016. Time from submission to first decision 43 days, Time from acceptance to online publication 22 days).