Custom Student Evaluations Of Teaching

Maybe, just maybe, if more of the comments on our student evaluations looked like the following, they’d be worth it:

9.5/10

would take again.

I just realized that rhymed

And now I’m feeling sublime.

They call me Bertrand Russell

You know, I’m all about the hustle

Sense data is my life, it’s also yours, this ain’t a puzzle.

I get recognized as “Ayer”,

and “A true fuckin’ player”

but those terms are interchangeable my bad, ’twas a tautological err

They sometimes call me Quine.

I got bars, I got lines.

I’ll eradicate your whole existence, (What existence?) it’s not in our space and time.

I’m known round here as Kripke,

The king of New York City

They tried to bind me to their laws, too bad their laws weren’t explained to me.

Aristotle, Plato, Socrates, Machiavelli, Mephistopheles

Metaphysics is our business. Our swagger’s in our Philosophies!

That’s a comment Daniel Harris (Hunter College) once reported receiving, shared in the comments on a previous post about student evaluations. Though individual comments on student evaluations of teaching (SETs) can be amusing and sometimes helpful (along with occasionally offensive and frequently dull), there have long been doubts about how informative and useful SETs are. (See, for example, this amusing, if predictable, finding.)

A new meta-study, published in the September 2017 issue of Studies in Educational Evaluation, drives another nail into the coffin. It shows that previously marshaled evidence of a correlation between student learning and student evaluations of teachers was the result of studies with small sample sizes and of publication bias. Here’s the abstract:

Student evaluation of teaching (SET) ratings are used to evaluate faculty’s teaching effectiveness based on a widespread belief that students learn more from highly rated professors. The key evidence cited in support of this belief are meta-analyses of multisection studies showing small-to-moderate correlations between SET ratings and student achievement (e.g., Cohen, 1980, 1981; Feldman, 1989). We re-analyzed previously published meta-analyses of the multisection studies and found that their findings were an artifact of small sample sized studies and publication bias. Whereas the small sample sized studies showed large and moderate correlation, the large sample sized studies showed no or only minimal correlation between SET ratings and learning. Our up-to-date meta-analysis of all multisection studies revealed no significant correlations between the SET ratings and learning. These findings suggest that institutions focused on student learning and career success may want to abandon SET ratings as a measure of faculty’s teaching effectiveness.

In short, the study, by psychologists Bob Uttl and Carmela A. White of the University of British Columbia, and Daniela Wong Gonzalez of the University of Windsor, concludes that “ratings are unrelated to student learning.”

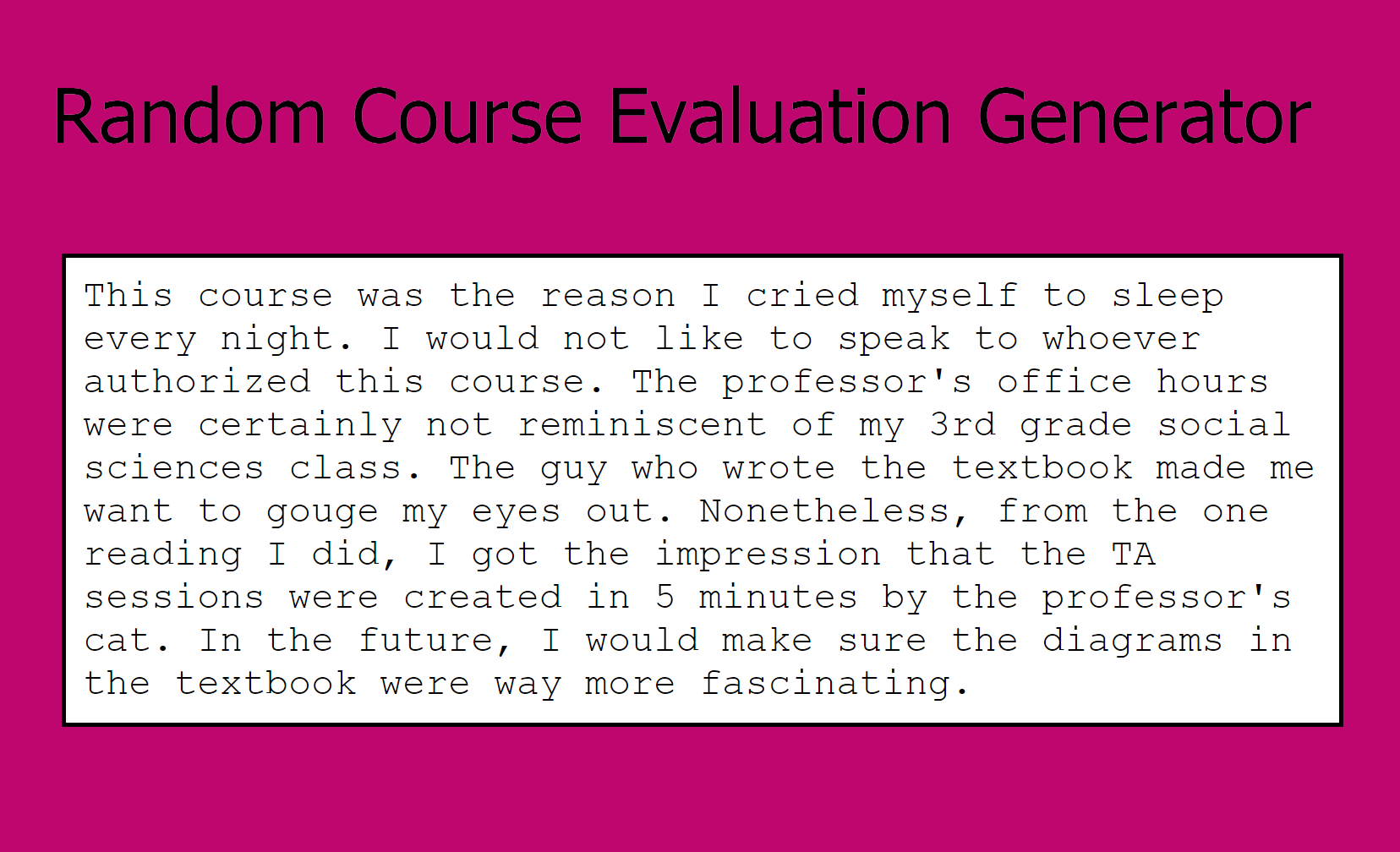

The students might as well be using the random course evaluation generator.

In a post here about two and a half years ago, I asked how philosophy professors thought they should be assessed by their students. I think the topic is worth revisiting. Professors can distribute their own custom course evaluation forms at any point in the term, should they care to, and departments sometimes have a say in which questions are asked of their students in the official end-of-term SETs.

Designing such a form involves figuring out what we want to know from our students. We shouldn’t expect our custom questionnaires to do a better job of reporting on teaching quality in general than the ones studied. But we can ask more specific questions that get at students’ understanding and their impressions of us and our courses. Such questions can emphasize substantive feedback rather than grading us with a number.

So, what do you want to learn from your students about your teaching? And what’s a good way to do so?

And if you already distribute your own custom course evaluation, please consider sharing it here (either by linking to an online version of it, cutting and pasting the text, or emailing me the document).

This is not *exactly* on point. And it’s entirely self-serving. But I’ve found it useful to include the question “Would you like to nominate your professor for a teaching award?” in my evaluations. It gets me nominated for teaching awards more often.

I guess it’s maybe not too far off point. It is a question I want to know my students’ answers to…

I have a quick question about one of the studies (by Buchert et al. 2008) allegedly undermining the merit of strong student evaluations: If it was found upon close analysis that a gold medal sprinter had reached the same velocity two meters into the 100 meter race that he/she had when crossing the finishing line, would we remove the medal or think any less of the performance?

Evaluations give students a chance to report any major misstep on the part of the prof, so if in the end they don’t report any, then surely that has to count for something.

As for the realization that appearance matters, one cannot welcome the embodied turn in cognitive science and philosophy of mind and insist on live classroom teaching over remote technologies while at the same time deploring the fact that we are not hiring disembodied intellects.

There are no doubt reasons that tell against using course evals. I think the influence of biases is a big one. And I initially felt a happy feeling of, yay, this meta-study gives another reason: lack of correlation with learning outcomes. But then I worried I would be inconsistent to recognize another reason here. When online learning companies trying to interest my school in their services tell me that studies show their courses compare favorably in learning outcomes to in-person teaching, I point out that they don’t have studies of anything remotely like philosophy, or really of anything focused on writing and critical thinking. And I don’t find their studies of such different classes relevant to my philosophy courses, or to serious college-level courses in many other fields that are not CS, math, the sciences, or low-level foreign languages. So is there evidence in the meta-study that evals of seminar or section leaders fail to correspond to learning in courses more like philosophy? Happy to hear so, if so.

I have two words in response: peer evaluation. Students can be bought off with inflated grades (and faculty can thus claim the highest evals in the department and be loved by administrators for burgeoning enrollments), but those who hand out such grades can be utterly unqualified to stand and deliver. How can one know? Have tenured peers observe tenure-track (TT) faculty teaching at least four times, each time by a different reviewer, and sometimes twice by faculty who express concerns. (I have done the latter; it was done to me way back when as well.) Lecturers need periodic review like that too. And grad student TAs with full-class responsibilities would likely benefit from in-class faculty review (I had no such review in my 3 years in that role but could have used it).

This is mostly an anecdotal comment but I have had experience in culling out bad faculty–TT and lecturers–based on peer review and in spite of “excellent” SETs. Somebody has to be guarding the guards.

Honest question: are the data on peer evaluations of teaching any better (or worse) than SETs? In other words, how confident should we be in our ability to evaluate whether or not a professor’s teaching affects (positively, negatively, or neutrally) student learning (as opposed to student engagement or student enjoyment)? Given other data on philosophical expertise, I’m not ready to trust my own judgments about whether someone is a good teacher.

Good question and I know of no such study. That’s why I labelled my comment as from my own point of view, based on a lot of experience. In partial reply I would say that having multiple peer reviews helps compensate for deficiencies that might arise from one particular review (either by missing something or being too kind).

I’ll also take a moment to apologize to Justin for a partial thread hijack since I offer no particular advice on self-administered SETs. But I am concerned that the profession needs something besides official SETs to evaluate teaching, and in that spirit I offer peer review as part of a solution.

So most schools don’t care about teaching evaluations as a means of measuring the true objective merit of effective teaching or anything like that. They care about a teacher’s ability to attract students and increase enrollment. That said, I personally think the claim that high evaluations reflect easy grading is way overplayed. I have known plenty of easy graders whom students did not like, as well as the reverse.

Two things are getting muddled here. Student evals seem like a not-useful way of comparing the quality of different teachers for purposes of raises, promotion, etc. (By the way, I do not think that the studies show this; I think they are quite consistent with the claim that evals, as part of a larger evaluation system that includes peer judgment, do help us distinguish very bad teaching from the rest.) That does not mean they do not supply useful information to teachers themselves about how to improve the quality of their instruction. Of course, the raw numbers are pretty close to useless for this. But the comments can be very useful. In particular, in large classes, when several students pick up on the same positive or negative, the reader can look carefully at that. For example, if it is an irritating verbal tic, or a tendency to fluff responses to certain kinds of questions, they can record themselves, check whether they indeed do it, and work to correct it. One of the reasons student evaluations are useless is that we don’t bother to use them.

The thing about peer evaluations is this. You want to be evaluated by expert evaluators. So peer evaluators need protocols, training, and discipline. Most peer evaluation schemes provide none of these.

An anecdote. When I was on a divisional committee, I was struck by a particular case in which I read every single student evaluation and every single peer evaluation. The peer evaluations were inflated nonsense. The student evaluations, semester after semester, picked up on the same easily corrected flaws. Neither the peer evaluators nor the instructor was taking the students the slightest bit seriously. It was really appalling.

I suggest getting rid of numerical fill-in-the-bubble evals and replacing them with pointed questions requiring discursive answers, plus an open-ended component. And a requirement that teachers meet with a randomly selected group of students a week after grades are in and read the comments with them…

Thanks, Harry. I think your suggestions about student evaluations are good ones. UBC has had a similar experience with peer evaluations to the one you mention. Apparently UBC psychology is one of the only faculties that tend to get critical peer reviews, and that’s because they’ve moved to anonymous reviewing. (The instructor’s course is recorded and viewed by the peer reviewers; the instructor doesn’t know who they are.) One thing sorely lacking here is training for peer evaluation. Do you know of any good references on this?

Thanks,

Harry, as usual, these are very reflective and useful comments on evaluating teaching.

In partial reply to your very good point about the need to train peer evaluators: as I think about it, my department in effect has an ongoing training program. Here’s why.

First, my department (which incidentally has historical ties to yours; in the old days your chair was ours too), and in fact my entire institution, has senate rules that require at least 2 peer reviews every other year while on the tenure track (this has been policy since the 70s). So most faculty are reviewed at least 6 times, and many more than that.

Second, our department in particular has an executive committee made up of all tenured members (not all departments do this). This means that all our tenured faculty are at least consulted on the status of every TT member, and most of them peer-review every TT member.

Third, over the course of the 6-year review of every TT member, there is an ongoing dialogue within the executive committee (in closed session, by law) about the reports that peer reviewers provide. This allows the merits of a probationary faculty member to be discussed from very different points of view, and it also allows tenured faculty to reflect on how they conduct peer reviews, what to look out for, and what to prioritize about teaching. For example, reviewers have historically been encouraged by senior faculty to offer suggestions for improvement. While there is no formal template for peer review, over time we have evolved an informal report structure that one first learns from the reports written about oneself while on the TT, and that is reinforced once one is tenured and involved in making tenure decisions.

Fourth, therefore, over time there is a tendency for everyone who is tenured to learn criteria for breadth and depth of coverage, effective means of interacting with students, and what constitutes sound evaluations of student work.

While this is just an artifact of our institution’s general commitment to peer evaluation, reinforced by our own situation of having every tenured member knowledgeable about every case, the overall result is an ongoing training exercise in how to conduct peer review.

Again, I apologize to Justin if this is just too far afield from the OP, but these are important issues.